Recently in this series we have been looking at the historical documentation of supernatural claims. For our next question, we will examine whether these events could have occurred naturally:

7. What are the odds that the purported supernatural events could have occurred for non-supernatural reasons?

From a Bayesian perspective, the probability of a religion is basically given by the prior probability times the evidence for it, and the only thing that counts as evidence for a religion is an observation which is more likely to happen if the religion is true than if it is false. So a religion cannot be strongly confirmed by a (seeming) miracle, unless that miracle is unlikely to happen if the religion is false.
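For readers who like to see this written out, here is a rough sketch of the same point using the odds form of Bayes' theorem. The symbols are my own shorthand for illustration: R stands for "the religion is true" and E for "the purported miracle was observed (or reported)".

```latex
\[
\underbrace{\frac{P(R \mid E)}{P(\neg R \mid E)}}_{\text{posterior odds}}
=
\underbrace{\frac{P(R)}{P(\neg R)}}_{\text{prior odds}}
\times
\underbrace{\frac{P(E \mid R)}{P(E \mid \neg R)}}_{\text{likelihood ratio}}
\]
```

If the event is about as likely on naturalism as on the religion, the likelihood ratio is close to 1 and the odds barely move. Only when P(E | not-R) is small compared to P(E | R) does the observation count as strong confirmation, which is why the question in this post is how probable these events would be for non-supernatural reasons.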

Plausible Naturalistic Explanations

Many purported "miracles" have perfectly plausible explanations in terms of known natural processes.

For example, I'm not particularly impressed by weeping statues or milk-drinking idols, since they can be easily explained by capillary action. These sorts of phenomena are not hard to produce by fraud and/or accident, and shouldn't count as any sort of demonstration of the supernatural. A similar statement applies to any guru or holy man who does miracles that are similar to the sorts of things a stage magician does by sleight-of-hand, e.g. causing small objects to appear in one's hand (especially if, like the supposedly divine Sathya Sai Baba, one is occasionally caught blatantly cheating...)

As far as I know I was raised in the only household in America which subscribes to both Christianity Today and the Skeptical Inquirer. If the good folks at the Inquirer can refute your Bigfoot sighting or paranormal abilities by their critical investigations, then probably you should pay attention to that. (However, along with their high quality empirical investigations showing how various paranormal phenomena can be faked, you also get a bunch of tendentious articles about how philosophy of science proves religious people are ignoramuses, ranging along a whole axis of philosophical sophistication. In any given issue you usually get articles of both sorts from different people.)

Implausible Naturalistic Explanations

Other types of miracle stories involve claimed events for which there is basically no reasonable natural explanation. In such cases, the only reasonable options are: either to postulate something supernatural, or else to deny that the claimed event actually happened as stated. (The difficulty of the latter path obviously depends on the degree to which there is high quality historical documentation of the event.)

To be clear, you can always come up with a naturalistic explanation for any event, if you try hard enough and don't care how implausible your explanation is. But sometimes these naturalistic explanations remind me a bit of the classic TV cartoon Scooby-Doo. The original incarnation of the show featured some amateur detectives who investigate paranormal phenomena. However, the phenomena invariably turn out to be hoaxes, contrived by the machinations of some villain.

Sometimes, the naturalistic explanation given is far more contrived and implausible than it would be for the supernatural entity to actually exist! For example, in one episode, at first you think that a certain character Lisa has been turned into a vampire, but it turns out that all that's actually going on is that somebody hypnotized Lisa so that, whenever she answers the phone and hears a bell ringing, she puts on a false set of vampire teeth and pretends to be a vampire!

In other words, so long as an explanation belongs to the realm of Victorian sensationalized psychology, rather than the realm of Victorian Gothic thrillers, we can accept it as the final explanation for what happened, even if that explanation is completely implausible to anyone with even a superficial understanding of human behavior.

(In this style of thinking, the Demarcation Problem apparently reduces to a question of genre classification. A similar thought pattern can be seen in those who believe that the multiverse and the simulation hypothesis are science fiction and therefore potentially credible, whereas angels and ghosts and miracles are fantasy and therefore not potentially credible. But thematic flavoring is not the test of truth. UFO cults base their mythology around science fiction tropes, but can be seen to engage in many of the same patterns of pathological reasoning that similar religious-themed cults engage in. And while there is certainly a nonzero amount of claimed observational evidence for angels, ghosts, and miracles, as far as I know nobody has ever claimed to have met the aliens who are simulating our universe in their computer.)

Rationalized Miracles

Sometimes, the proponents of "scientific" explanations for miracles are actually religious people, who believe they are supporting the biblical narrative rather than undermining it. As if finding ways that the "miracles" might have happened without appealing to God would somehow allow people to find religion believable again. But this seems quite silly to me. If the "miracle" has a fully natural explanation, which doesn't point to anything outside of the universe, then by definition it is no longer a miracle in the religious sense of the word.

Some theists of a rationalistic bent seem to think it is somehow better if God never strictly suspends the laws of physics we moderns know and love, but only does miracles which are technically naturally possible (yet are still extremely improbable from a naturalistic perspective). This approach would make all miracles special cases of God's providential control of ordinary natural processes. And indeed, in quantum mechanics, a lot of things which we think of as impossible, such as an object passing through a wall, are technically possible (albeit with an exceedingly minuscule probability, in the case of macroscopic objects).

But this seems to defeat the point of having a quantum theory in the first place. The reason why we regard QM models as predictive is that they still allow us to make statistical predictions about what will happen. So if you want to postulate an extreme violation of these rules of probability, it seems to me that this violates the laws of QM, and is therefore just as much a suspension of the usual laws of physics as e.g. violating electric charge conservation would be.

Also, this kind of "Religious Naturalism" (to coin a phrase) doesn't seem to fit very well with events like appearances of angels, or the Ascension of Jesus into Heaven. Biblical events like these, if you accept them, cannot be understood merely as improbable events taking place within our own universe, but imply the existence of other worlds, containing new types of entities. If such events really happened then I don't see how to make sense of them, except on the hypothesis that our physical universe is not a closed system! In addition to the physical universe, there has to be some sort of spiritual realm, so to speak, where the angels and the departed saints and possibly other things dwell. Given the limitations of human thought, we can only visualize this as a kind of "place", even if it transcends our own spacetime. But however we imagine it, it means that God's actions cannot be thought of as limited to merely the physics of our own universe.

This is not necessarily a problem if you believe, as I do, that our physics models are just an approximate description of a limited aspect of reality, and that a full description of reality would require discussing the actions of various supernatural agents. But, if there are indeed forms of reality outside of our model, then why not just admit that the model is inapplicable in certain situations, where these other realities become important?

* * *

Case Study #1: Miracles of the Exodus

To give an obvious example of miracles for which the proposed naturalistic explanations are rather obviously futile, consider the Ten Plagues, the Crossing of the Red Sea, the Manna from Heaven, and the other miracles described in the Book of Exodus. I am not aware of anything else in ancient literature that is remotely parallel to these dramatic miracles, among texts which were intended to be taken as serious history rather than as entertainment.

It seems obviously futile to try to explain these miracles in purely natural terms.

(Of course, even on a supernaturalist interpretation of these miracles, there might still be some natural causes involved in performance of the miracle. For example, before the Israelites crossed the Sea, Exodus 14:21 states that "the Lord drove the sea back with a strong east wind and turned it into dry land"; in other words, the wind was God's physical instrument for driving away the water. But that just raises the question of how a wind powerful enough to create "walls of water on either side" came along in the first place. The important question here is not whether physical causality was involved at any stage of the process—the entire point of doing a miracle in the physical world, is that it leads to some tangible physical consequences—but whether it is plausible that the event could have originated from only natural causes, without those natural causes being diverted from their usual course by some special supernatural exception to the usual rules governing the physical universe.)

Yet there is still a misguided intellectual parlor game which tries to explain these grand miracles using only natural causes. The most notorious contender was the arch-crackpot Immanuel Velikovsky, who tried to explain several miracles in the Bible by proposing near misses with other planetary bodies in the solar system, but less insane versions of such conjectures keep being rolled out by naturalists of various sorts.

Most of these attempted naturalistic explanations aren't really very scientifically plausible in the first place. But there is a deeper problem with this project. Such naturalistic explanations utterly fail to explain the extraordinary degree of coincidental timing that is required.

For example, let's suppose for the sake of argument that we've found an explanation for why some body of water (whether or not it was the same as the traditionally understood Red Sea location) might have drained very quickly, leaving a dry path for the Israelites to walk through. The fact that this—presumably extremely rare—event happened just as Moses was leading an escaping band of slaves from the Egyptians, and that the unusual phenomenon ended just in time to kill the Egyptian army, is one helluva coincidence!

Taking into account further coincidences, such as these Ten Plagues arising (and ceasing) in ways coordinated with Moses and Aaron's threats to Pharaoh, a plague which only targets firstborn sons (but not the Israelites who celebrated the Passover) and the description of the manna as only appearing 6 days a week (skipping the Sabbath Day so that the Israelites could rest)—it is clear that no purely naturalist explanation can serve as a plausible explanation for such phenomena.

Of course the proponents of such naturalistic theories are free to suppose that textual details such as these are later embellishments of the story. But for the proponent of a specific naturalistic explanation for a miracle, this move is rather problematic. A scientific explanation requires there to be some data supporting it, after all. So this sort of skeptic tends to adopt a weirdly deferential reading of the text, hunting for clues that identify one particular natural phenomenon. "If my scientific hypothesis is correct", they say, "It makes sense that the plagues should have occurred in this exact order, and lasted exactly this length of time, and look how well this particular Hebrew word describes this natural phenomenon!" But to take those parts of the text hyper-literalistically and dogmatically, while at the same time proposing that all the other inconvenient bits (whatever doesn't fit your theory) are later legends, seems like special pleading. So the methodology here isn't especially coherent.

If you wish to deny that the Exodus was supernatural, there is a much more sensible and obvious strategy, which is to simply declare that the whole event is legendary. (Or, if there was a historical core event, that it has been buried under so many layers of legend that it is impossible for modern scholars to reconstruct what really happened.)

Yes, it's a bit strange that Jewish priests should have invented a national epic that portrayed the Israelites as hapless and cowardly slaves rescued by unprecedented miracles. Nevertheless, that's nothing like so improbable as accepting the historicity of the Torah yet denying the hand of God in the process!

Thus, while the miracles of the Exodus score very well on this particular criterion, they are unfortunately too far in the past (and insufficiently corroborated) to clearly belong to the realm of history, rather than mythology. This does not necessarily make a Jew or Christian irrational for believing that the events occurred, but it must be in the context of a broader worldview, and not because the historical proofs for it are overwhelming, considered in themselves.

We will therefore next consider some more recent historically documented miracles, particularly focussing on two important miracle claims with particularly good source documentation: namely 1) the Splitting of the Moon, and 2) the Resurrection of Jesus. The testimonial chains for these events were the subject of the last installment; in this post we will ask whether—assuming, as we have already argued for, that these claims do go back to the testimony of those who claimed to be eyewitnesses—it is plausible that they could have happened naturally.

In each case, I will also compare these miracles to other, arguably parallel events, in order to make sure that the events in question are truly unique.

Case Study #2: The Splitting of the Moon

As previously discussed, the "Splitting of the Moon" is seemingly briefly mentioned by the Quran, and is also reported in the Hadith, via multiple chains of transmission going back to four of the original Companions of Mohammad. According to these reports, in order to provide a miraculous sign, God split the moon into two pieces, which temporarily moved to different sides of a mountain.

Let's look at the primary sources. Anas bin Malik narrated:

“The people of Mecca asked Allah's Messenger to show them a miracle. So he showed them the moon split in two halves between which they saw the Hira' mountain.” (Sahih Al Bukhari)

Abdullah Ibn Masud narrated:

“During the lifetime of Allah’s Messenger, the moon was split into two parts; one part remained over the mountain, and the other part went beyond the mountain. On that, Allah’s Messenger said, `Witness this miracle.'” (Sahih Al Bukhari)

Ibn 'Abbas narrated:

"The moon was split into two parts during the lifetime of the Prophet." (Sahih Al Bukhari)

Finally, from Jubayr ibn Mut'im we have the following:

"The moon was split into two pieces during the time of Allah's Prophet; a part of the moon was over one mountain, and another part over another mountain. So they said: `Muhammad has taken us by his magic'. They then said: `If he was able to take us by magic, he will not be able to do so with all people.' "

I should pause here and say that these are not excerpts from more elaborate descriptions of the miracle. To the best of my ability to tell from the English translations available on the Internet, what I have just quoted is the entire text (apart from the chain of narrators) of the four hadiths which form the root of the tree of testimony about this miracle. (In some cases, when the hadith was transmitted by multiple routes, there are minor textual variants, and in such cases I have attempted to select the more elaborate version.)

(I did find a more elaborate narrative version online; but as far as I can tell, it is not a primary source, but rather a composite stitched together from many hadith, including what I think must be information from later, secondary sources. I am therefore going to disregard this version, although I am open to correction on this point from any experts in this area.)

As far as can be told from the authentic hadith, it seems that nobody else outside Mecca noticed this event. Two of the Companions do indicate that the pagans in Mecca also witnessed the miracle, but nobody claims that they came to believe in Islam as a result.

It is of course quite obvious that no ordinary natural power would have been capable of actually physically splitting the moon in two and then bringing it back together again. This is indeed the strongest aspect of the miracle, since manipulating heavenly bodies would be quite out of the reach of a human impostor.

At the same time, there is no astrophysical evidence of such a disruptive event. Of course God could have miraculously healed the cracks and cleaned up any other inconvenient astronomical evidence of the event happening, but this is a little bit like saying he could have left dinosaur bones in the Earth to trick us into thinking evolution happened. It seems deceptive. If you want to say that a miracle actually affected the physical world, I think it should leave behind at least some messy physical evidence suggesting it actually happened.

(Some Muslims use misleading photographs to claim that the Rima Ariadaeus trench is evidence left over from the Splitting of the Moon, but this trench is only about 300 km long so it doesn't go nearly all the way around, and it is similar to many other trenches on the Moon. It is also unclear why, if God was deleting nearly all of the physical evidence for this event, he would leave this one little crack behind to prove his work.)

It is true that, depending on the nature of a miracle, it might not leave enough physical evidence behind to convince skeptics. But when the miracle is such that it ought to have left some evidence behind and didn't, then even I, who already believe in God, become skeptical. In other words, the splitting of the Moon is actually 2 miracles, the 1st being to divide the Moon, and the 2nd being to rejoin it in such a manner as to erase all physical effects of the 1st miracle (apart from any changes in the brains of those who supposedly saw it).

Hence, it appears that the sole purpose of such a miracle would be to serve as a temporary sign to instill belief, not to actually accomplish any lasting physical purpose. (In this respect it is quite unlike the Exodus miracles, which rescued Israel from slavery and provided for her in the wilderness; nor is it like the Resurrection of Jesus which rescued Christ from death, and points forward to humanity being likewise rescued from death.) Splitting the Moon does indeed suggest an extreme degree of power, and perhaps a certain degree of capriciousness. But considered in itself, the miracle is sterile and does not lead to any further consequences.

Because of this lack of physical evidence left behind, there are other ways of interpreting the miracle besides the moon literally splitting into two physical pieces and then rejoining. For example, God might have merely miraculously caused the appearance of the moon being split in two.

To my mind, that is a more reasonable interpretation of the event. (Similarly, I would rather not explain the biblical miracle of Joshua commanding the Sun and Moon to stand still as a miracle which affected orbits in the solar system, but rather as a local event which affected the perceptions of those in the valley of Ayalon.)

Even taken in this sense, the Splitting of the Moon is definitely one of the more impressive non-Christian miracles, assuming the reports are accurate. A sign in the heavens would be very difficult to fake with any kind of sleight-of-hand trick.

Possible Parallels: More Signs in the Heavens

On the other hand, it doesn't seem totally crazy to say that people may have witnessed some real atmospheric or visual effect, which was then misinterpreted by people eager to believe that they had seen a sign. There are many other instances of groups of religious people temporarily seeing strange things in the sky, when primed to do so by expectation. Or to take an example less related to traditional religions (but still conducive to fanaticism), there are lots of people who claim to have sighted UFOs in the sky.

As a general rule, I don't think such people are lying about having seen something; I would instead question their interpretation of what they saw, since merely seeing a collection of lights moving around in the sky that you can't identify, doesn't necessarily mean that it is actually an alien spacecraft. As someone once said, I have no objection when people claim to have seen unidentified flying objects, it's only when people try to identify them with conspiracy theories about alien invaders, that skepticism is warranted.

Another possible parallel is the very heavily witnessed Roman Catholic Miracle of the Sun, supposedly seen by thousands of people—though different people saw different things, and some people saw nothing. A large crowd had been gathered together and told that they would see a miracle if they looked at the Sun, and many of the people there saw some odd visual effects when they did so.

But as we were all warned before the recent total eclipse, it is quite dangerous to stare at the sun for any extended period of time, since doing so can damage the retina. It is not really particularly surprising that the participants saw a variety of dazzling visual phenomena after doing this foolish thing, and then interpreted it as confirmation of the miracle they were already expecting.

(Obviously, the Moon is less likely to cause these kinds of dazzling effects, so this is not a perfect parallel to the Islamic claim, but it does illustrate the way in which crowds primed to accept a miracle can interpret any strange thing they see in the sky as a confirmation of their beliefs.)

A solar miracle of a somewhat different kind is reported in the Gospels, which state that the Sun was darkened for the final three hours that Jesus hung on the Cross. In addition to the Gospels, this miracle appears to have been reported by two different non-Christian historians (although these works are now lost so we know about them only because of Christian commentary). It might be possible to explain this strange Darkness by natural causes (although due to the timing of Passover it cannot have been a normal solar eclipse). However, one should take into account the fact that, if the cause were natural, there would have been no reason for its timing to match up with the death of Jesus.

(Like the Resurrection, this miracle is at cross-purposes with the Quran which, as we have already discussed, states that Jesus was not actually crucified.)

These parallels, together with the sparseness of how the miracle was actually described, make me rather uncertain how much evidence a miracle like this really provides. There is no question in my mind that it counts as some significant evidence for the truth of Islam, but it seems to me that the evidence described in the next section will surpass it.

Case Study #3: The Resurrection of Jesus

The Gospels and Acts together record about a dozen different examples of Jesus appearing to people after he had died. The following passage taken from the 24th chapter of the Gospel of St. Luke describes three of these events:

[To 2 disciples on the Road:] Now that same day two of them were going to a village called Emmaus, about seven miles from Jerusalem. They were talking with each other about everything that had happened. As they talked and discussed these things with each other, Jesus himself came up and walked along with them; but they were kept from recognizing him.

He asked them, “What are you discussing together as you walk along?”

They stood still, their faces downcast. One of them, named Cleopas, asked him, “Are you the only one visiting Jerusalem who does not know the things that have happened there in these days?”

“What things?” he asked.

“About Jesus of Nazareth,” they replied. “He was a prophet, powerful in word and deed before God and all the people. The chief priests and our rulers handed him over to be sentenced to death, and they crucified him; but we had hoped that he was the one who was going to redeem Israel. And what is more, it is the third day since all this took place. In addition, some of our women amazed us. They went to the tomb early this morning but didn’t find his body. They came and told us that they had seen a vision of angels, who said he was alive. Then some of our companions went to the tomb and found it just as the women had said, but they did not see Jesus.”

He said to them, “How foolish you are, and how slow to believe all that the prophets have spoken! Did not the Messiah have to suffer these things and then enter his glory?” And beginning with Moses and all the Prophets, he explained to them what was said in all the Scriptures concerning himself.

As they approached the village to which they were going, Jesus continued on as if he were going farther. But they urged him strongly, “Stay with us, for it is nearly evening; the day is almost over.” So he went in to stay with them.

When he was at the table with them, he took bread, gave thanks, broke it and began to give it to them. Then their eyes were opened and they recognized him, and he disappeared from their sight. They asked each other, “Were not our hearts burning within us while he talked with us on the road and opened the Scriptures to us?”

[To St. Peter individually:] They got up and returned at once to Jerusalem. There they found the Eleven and those with them, assembled together and saying, “It is true! The Lord has risen and has appeared to Simon.” Then the two told what had happened on the way, and how Jesus was recognized by them when he broke the bread.

[To the larger group of disciples:] While they were still talking about this, Jesus himself stood among them and said to them, “Peace be with you.”

They were startled and frightened, thinking they saw a ghost. He said to them, “Why are you troubled, and why do doubts rise in your minds? Look at my hands and my feet. It is I myself! Touch me and see; a ghost does not have flesh and bones, as you see I have.”

When he had said this, he showed them his hands and feet. And while they still did not believe it because of joy and amazement, he asked them, “Do you have anything here to eat?” They gave him a piece of broiled fish, and he took it and ate it in their presence.

He said to them, “This is what I told you while I was still with you: Everything must be fulfilled that is written about me in the Law of Moses, the Prophets and the Psalms.”

Then he opened their minds so they could understand the Scriptures. He told them, “This is what is written: The Messiah will suffer and rise from the dead on the third day, and repentance for the forgiveness of sins will be preached in his name to all nations, beginning at Jerusalem. You are witnesses of these things. I am going to send you what my Father has promised; but stay in the city until you have been clothed with power from on high.”

Several aspects of these Resurrection appearances are worth mentioning (most of which are apparent from the passage above, but I am interpreting them within the broader context of the other New Testament accounts):

1) It involved multiple events, in which Jesus appeared to different overlapping groups of people, or sometimes to individual persons.

2) Many aspects of the experience were quite strange, and don't fit anyone's preconceptions about how bodies and spirits work. Jesus appears and disappears instantly, and yet he is at pains to prove to his disciples that he is not a ghost. He can be recognized, yet some people seem to have difficulty doing so at first.

3) In order to release the disciples from their doubts about whether he was really alive, Jesus allowed himself to interact with the world according to at least 4 of the 5 sense modalities:

SIGHT - Jesus was visible to all of them at the same time, and showed them his limbs (these are mentioned specifically, presumably because that is where the crucifixion wounds were).

HEARING - Jesus spoke audibly to all of them at the same time.

TOUCH - Jesus allowed them to touch him in order to feel his flesh and bones.

TASTE - Jesus shared several common meals with the disciples, including events where he ate food, and at least one event where he cooked the food himself and gave it to the disciples.

4) Each of these experiences was prolonged over a considerable time, long enough to allow for extended conversations, in which Jesus was able to give them significant instruction about how his suffering fulfilled the Old Testament prophecies, and what they should do next.

and of course we mustn't forget the first event on Easter Day:

5) Jesus' tomb was found mysteriously empty, with the stone rolled away and the body missing, with the women reporting a message from angels that Jesus rose from the dead.

Assuming these events happened as stated, it is really quite hard to come up with any reasonable explanations for how such a large group of people could have been deceived in all of these ways simultaneously. Only if we throw out half of the reported data, can explanations such as group hallucinations even be considered; even apart from the antecedent improbability of such a hallucination affecting a dozen people at the same time.

And yet, on the other hand, after the Resurrection, Jesus' body was also capable of instantly appearing and disappearing, things which aren't consistent with any of the more eccentric skeptical theories (such as Identical Twins or the Swoon Theory) in which the disciples saw an actual living person.

The only simple naturalistic explanation, which doesn't require the conjunction of multiple weird things happening separately, is that the Resurrection was a lie promulgated by the earliest disciples. Indeed, this is the earliest known contemporary rebuttal ("the disciples stole the body", as relayed in Matt 27:64, 28:13).

A Possible Parallel: "Seeing the Rebbe"

In order to check whether there are parallel events to this event, it is instructive to consider the case of a much more recent Jewish rabbi who inspired a Messianic movement.

Rabbi Menachem Mendel Schneerson was an extremely pious Jew with a reputation for great seriousness and holiness, and under his leadership he developed the nearly extinct Chabad (or Lubavitch) movement into a worldwide Jewish revival movement (here is a balanced discussion of his ideals). As a result, there was much speculation that he would be the Messiah, despite the fact that (unlike Jesus) he seemingly never made this claim himself, nor approved of it being explicitly said in his presence.

However, the aspect most relevant to the present discussion is the reports from people who say they saw him after his death. There is in fact an entire website, Seeing the Rebbe, dedicated to collecting these claims.

The parallels to (certain aspects of) the early Jesus movement are striking. But, when it comes to evaluating the strength of an evidential case, the details matter. A closer inspection of these claims shows that there are also some pretty key dissimilarities to the Gospels, which make it much easier to explain the Lubavitch rabbi's "appearances" without recourse to the supernatural.

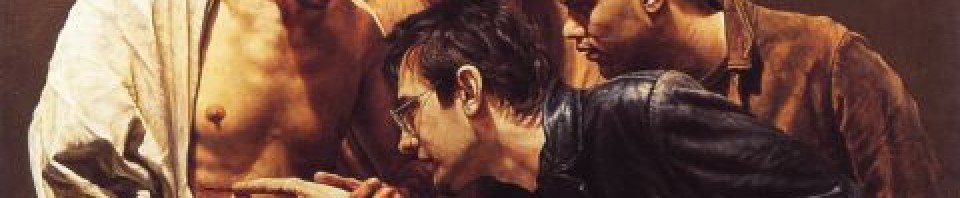

The first story on the website is quite different from the others. In this case alone, a photograph is shown in which, it is claimed, the rebbe has mysteriously appeared in the photograph of a child, taken with a disposable camera. There is a very sharply defined man with a Jewish hat in the photo. The image is not at all like the ghostly apparitions which normally appear in claims of spirit photography; rather it is very clearly the image of an actual physical person. But the back of the man is turned. Apart from his clothing, only his neck and ears (and what might be a small portion of his beard) are visible. From the picture, he could easily be almost any Orthodox man (although the lightish color of the hair suggests someone elderly). This is hardly sufficient information to make a definitive identification. To explain this event naturally, the only mistake required is that the child didn't recall the man being there when the picture was taken. Since the photograph was developed days later, after the child returned from his trip, this memory lapse is hardly surprising.

In all the rest of the eleven accounts, the rebbe is perceived by a human, not in a photograph. The rebbe is suddenly visible, during a religious ritual, or while visiting a place associated with him. Each appearance is quite brief, lasting just a few seconds, or half a minute at most. Usually, only a single person sees the rebbe in any given story, while the others in the room don't notice him at all; however, in two of the stories another person confirms seeing him (among a larger group of people who don't). Some of the stories provide minor circumstantial coincidences that seem to confirm the experience, but none of it is extraordinarily implausible. One of the persons was skeptical beforehand, but was still present at a ritual intended "to greet the Rebbe with song and melody".

These experiences were almost exclusively visual, although in a single case the rebbe also speaks a brief sentence: “Don’t worry, the financial situation of your parents will be okay.” (This was part of a vision a yeshiva student had immediately upon waking up from sleep, a time when dream-like hallucinations are particularly common.)

Nobody claims he shared a Shabbat luncheon with them, or that he got into an extended argument about the Talmud.

Given this data, it does not seem improbable to me to claim that all of these experiences (except the one involving the camera) were hallucinations of one sort or another.

(By the way, this would not automatically prove that the experiences have no spiritual value, or even a supernatural cause. Nothing I believe is inconsistent with the claim that a holy Jewish leader might be allowed by the Almighty to appear to members of his religious movement, in visions after his death, in order to encourage them. Although the Lubavitch idiom that they "merited to see him" bothers me from a Christian spiritual framework, in which God reaches out to us by grace, not because we somehow earned it.)

Contrary to popular belief, minor hallucinations are not at all uncommon, even among people who are perfectly sane. For example, around half of widowed individuals have "bereavement hallucinations" of their loved ones, while they are grieving their deaths.

These experiences are not very difficult to explain from a neurological point of view. Our brains store information about a large number of concepts, which we use to interpret our sensory data. If you have known a person for a long time, then your brain develops an intricate concept of them, which is associated with many other concepts. In some cases, your idea of a person may be triggered so strongly that it overrides your normal sensory interpretation. In that case, you may see or hear the person in a situation where they aren't there. Similarly, a strong religious expectation may also cause a sensory override.

Or, to take a more mundane example, just the other day I was walking along the street and I thought I saw a dog moving out of the corner of my eye. When I looked at it more closely, it was just a normal construction cone sitting there. Apparently, my "dog" neurons had been triggered by some feature of the situation, and had fired inappropriately.

In such cases reality usually re-asserts itself pretty quickly. Such neural misfires do not usually lead to a long-term dissociation from reality, since as time passes, additional sense data quickly convinces the brain that its initial classification of stimuli was wrong.

Back to Jesus

So could the Resurrection appearances of Jesus in the Gospels be explained by such "sensory overrides"?

I think there are serious problems with that theory. One of the problems, obviously, is that Jesus was seen by larger groups, for example by more than a dozen men simultaneously. For a neurological misfire to affect many people at once, in the same fashion, would be a stunning coincidence. Two at once, perhaps, but never a dozen.
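To put a rough number on "stunning coincidence", here is a minimal sketch of the arithmetic. It assumes that each person's misfire is an independent event with the same small probability, and the value of `P_SINGLE` is a purely hypothetical placeholder, not a measured rate. A skeptic could reasonably reply that group suggestion makes the events correlated, which would raise the result, so treat this only as an illustration of how quickly independent coincidences multiply away.

```python
def joint_probability(p_single: float, n_people: int) -> float:
    """Chance that n_people all have the same misfire at the same moment,
    ASSUMING the misfires are independent and equally likely."""
    if not 0.0 <= p_single <= 1.0:
        raise ValueError("p_single must be a probability between 0 and 1")
    if n_people < 0:
        raise ValueError("n_people must be non-negative")
    return p_single ** n_people


# Hypothetical per-person chance of a matching misfire on a given occasion.
# This number is an assumption for illustration only.
P_SINGLE = 0.01

if __name__ == "__main__":
    for n in (1, 2, 12):
        print(f"{n:2d} people: {joint_probability(P_SINGLE, n):.3g}")
```

Under these assumptions the probability falls by a factor of a hundred for each additional witness, which is why two at once is conceivable while a dozen is not.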

A second problem is that if the accounts are at all accurate, Jesus remained present during his meetings long enough to have theological conversations involving multiple scriptural passages, and to interact with them in other ways. This would require a sustained, contagious distortion of reality, much more intense than anything needed to explain the Seeing the Rebbe website.

But I think there is an even more fundamental difference between the two types of appearances. Those who saw Rabbi Schneerson always immediately perceived it as being him. No doubt is ever expressed regarding his identification (despite the fact that many of these individuals never saw him during his life); in general the only doubt expressed is whether the experience was veridical or just a hallucination. This is exactly what we should expect in the case of a "sensory override" where the person's concept of "the Rebbe" was being imposed on the sensory data. In such cases, the vision is immediately recognized as fitting the mental concept, because it really is just the person's mental concept triggering.

(A partial exception: in one case the rebbe looked older than the person was expecting. However, old age is a stereotypically rabbinic quality; and it is always possible that the person had seen other pictures of him, which she was not consciously remembering.)

On the other hand, those who saw Rabbi Yeshua after his death sometimes failed to recognize immediately that it was him (cf. John 20:11-18), despite the fact that they had seen him before he died. Perhaps this was because, knowing intellectually that he had died, there was a mental block in accepting that he could be alive. Or perhaps there was something about his post-Resurrection appearance that was confusingly different from the way he had looked on earth.

Mary Magdalene thinks Jesus is the gardener at first; while Cleopas and his companion have an extended theological discussion with him, thinking he is just a mundane (but slightly clueless) stranger. Not until later in these encounters is there an "aha!" moment and they realize they are looking at Jesus. None of this would be possible if their "Jesus" neurons had been misfiring, causing them to experience Jesus' presence even though nobody was there.

In other words, the disciples experienced Jesus' body as objectively clearly present, even though it was subjectively confusing (who and what is this person?). This is in many ways the exact opposite of a hallucination, which is subjectively experienced as some definite entity, but is confusing in its relation to objectivity (I knew it was the rebbe, but maybe it was a hallucination?).

The disciples who express doubts about the Resurrection, nearly* always do so either because they were not present during a previous appearance (e.g. the male disciples doubting the women, or Thomas doubting the other male disciples in John 20), or because they are trying to figure out how to fit the event into their belief system (e.g. thinking maybe Jesus was a ghost). Although the disciples express both doubt and wonder, we are never told of anyone who doubts because they were unable to see Jesus, even though other people in the same room did see him. All of this speaks to something objectively present.

[*The one possible exception is Matt 28:17, in which we are not told which disciples doubted or what the source of their doubt was. Since we are not told, this loose end could easily be given either a favorable or unfavorable interpretation.]

Of course, the fact that Jesus' tomb was found empty, while the other rabbi's tomb is presumably still occupied, is another difference suggesting a greater objectivity in what happened to Jesus.

Another Claimed Parallel: The Golden Plates

Some skeptics bring up as another possible parallel to the Resurrection Appearances, the invisible golden plates I briefly mentioned before, which Joseph Smith supposedly translated the Book of Mormon from.

Although Smith normally kept these "plates" hidden from sight, in order to bolster his claims he did attempt to collect a set of Twelve Witnesses (counting Smith himself) to swear to having seen the plates (presumably in an attempt to construct a deliberate parallel to the 12 Apostles). These witnesses were all taken from among his family, close friends, and financial backers.

Now let me be clear on one point: the accumulated evidence for Smith being a charlatan is so compelling that I don't think there is any way that this testimony could overcome that hurdle. The only reason I am spending words on this, is to discuss whether it is a sufficiently close parallel to the Apostolic testimony to undermine the evidence for Christianity.

One notable lack of parallelism is that, oddly, almost every one of these 11 witnesses eventually broke away from following Joseph Smith (except for two members of the Whitmer family who died a few years after signing the declaration), although one was eventually reconciled. Obviously, this vision did not have the same spiritual power to transform these witnesses into fearless leaders, the way that the Resurrection transformed the Apostles. (However, some of the witnesses continued to assert quite strongly that they had seen the plates.)

A more important point here is that the behavior of at least the first 3 witnesses makes it pretty clear that there wasn't really an object there to see in the first place. Let's look at this more closely. Wikipedia summarizes the event like this:

On Sunday, June 28, 1829, Joseph Smith, Oliver Cowdery, David Whitmer, and Martin Harris went into the woods near the home of Peter Whitmer, Sr. and prayed to receive a vision of the golden plates. After some time, Harris left the other three men, believing his presence had prevented the vision from occurring. The remaining three again knelt and said they soon saw a light in the air overhead and an angel holding the golden plates. Smith retrieved Harris, and after praying at some length with him, Harris too said he saw the vision.

In other words, we are clearly not dealing here with some object that reflects light normally, and can be seen without the use of faith. We are dealing with an object which cannot be seen at all without first engaging in intense prayer. Indeed the fact that the most skeptical individual had to leave the area in order for the other witnesses to see the plates, and that, after Harris returned, Smith had to pray with him "at some length" suggests to me that quite a bit of psychological pressure had to be applied by Smith, in order to get the witnesses to agree that they saw something.

And after his testimony, Harris admitted on multiple occasions that he had only seen the plates "with the eyes of faith" or "spiritual eyes", not his "natural eyes". (Although later in his life he tried to backtrack on this point.)

Of course, there is no doubt that Jesus also had a lot of charismatic pull over his disciples. But if Christianity were false, then Jesus would still have been dead, and thus not in a very good position to do the necessary browbeating and cajoling of reluctant witnesses. To tell a convincing story, one would have to cast some of the disciples into this role, say St. Peter and St. Mary Magdalene. But if that is what really happened, one might expect the accounts to reflect this guiding role, just as the Mormon texts reflect the role of Smith.

If the New Testament texts seemed to point towards the sort of "sort of there, sort of not" experience one might expect from a manufactured vision, then I would not consider that kind of evidence to be remotely sufficient for purposes of founding a new religion. But they don't.

Of course, it is always possible to imagine that the texts are not accurately reporting the disciples' actual historical experiences. Maybe, a skeptic might say, if we had interviewed the Twelve disciples just a few weeks after the event, we would have found lots of tell-tale signs of falsehood, only they've all been cleaned up from the accounts that ended up in the New Testament.

Well, maybe. But note that this is all speculation about evidence we don't have. The point of this blog series is to compare different religions with respect to the evidence that we do have, to see how they measure up to each other. I don't expect anything in this post to convince the sort of skeptic who has a strong commitment to Naturalism to give up their deeply held belief that all religions are false. But, I do think that a fair-minded person should agree that not all religions have the same degree and types of evidence.

(By the way, I don't consider the minor discrepancies between the Gospel accounts to be a sign of falsehood; rather this is a normal feature of witness testimony. I would consider it to be far more suspicious if discrepancies weren't there, since it would be a sign that this "cleaning up" process had happened. Indeed, I don't think that the things that I, as a 21st century person would regard as tell-tale signs of fakeness, are necessarily the same things as the things a 1st century person would notice as potential problems.)

Case Study #4: Miracles of the Buddha

This section is going to be very short, because (as discussed in previous posts in this series) none of the miracles of Gautama Buddha are sufficiently established by nonlegendary, provably early texts to be worth considering in this regard. If our historical evidence is consistent with a story being written centuries after Buddha's death, and if it reads like a folk tale with an obvious moral, then I'm going to discount it.

Of these legendary miracles, the one that some traditions identify as the greatest sign of a true Buddha is the "Twin Miracle" (the earliest versions of which are discussed here), in which the Buddha simultaneously shoots fire and water out of every pore and limb of his body (while walking in the air). While this is admittedly impressive (and yes, quite inexplicable on Naturalism), let's be honest: it's also ludicrously, preposterously silly.

Fortunately for Gautama's reputation, it's not very hard to believe that it never happened.

* * *

Alternative Supernatural Explanations

So far in this installment, we've been operating under the unstated assumption that if a religion is false, then any claims it makes to supernatural power are also false. In other words, I am assuming that a Christian would try to explain e.g. a putative Muslim or pagan miracle using the same types of explanations (fakes, mistakes, legends) that a naturalistic skeptic would resort to. However, a religious worldview also opens up the possibility of supernatural explanations of what is going on with other religions. Such arguments must be considered as well.

For example, someone might try to explain miracles in other religions by saying that they actually have an origin in a non-divine supernatural power (e.g. demons, or psychic powers, or something).

As a Christian, I do believe in the existence of supernatural fallen angels. These demons are, unlike God, created beings with limited power and wisdom, who have chosen to abuse their free will by trying to resist God's kingdom. Even in the contemporary world, Christian missionaries who evangelize foreign tribes occasionally report encounters with "witch doctors" who appear to have actual supernatural powers to levitate objects or curse people—even if said manifestations subside when the Christian missionaries pray to the greater power of Jesus.

Just because a paranormal event is genuine in the sense of being caused by an actual paranormal being, does not necessarily mean that the paranormal being is not itself a liar trying to deceive people. In a Christian worldview, any such "miracles" caused by demons would be just as fraudulent as the miracles caused by human sleight-of-hand. The fact that the fraud might involve some physical powers that a materialist would have trouble believing in, would not really change the spiritual reality of what is going on in such situations. (Including, perhaps, that the demon's power is quite limited, and that it relies largely on terror and suggestion to trick human beings into accepting bondage voluntarily.)

However, if a non-Christian miracle can be given an obvious natural explanation (and most of them can be), then resorting to this more complicated hypothesis is completely unnecessary. And such accounts do involve making the significant concession that something is really "going on" in the other religion. In such cases, judging between the two religions would then require consideration of some other factor (e.g. the degree of goodness or power displayed by the competing miracles).

For example, the Gospels report that after Jesus did a certain healing miracle, some of his enemies accused him of "driving out demons with the help of Beelzebul, the prince of demons" (Matthew 12:24). In other words, Jesus' religious opponents did not deny that he performed supernatural feats, but proposed that he had made some sort of Faustian bargain with the Devil. (A similar accusation is perpetuated in the Talmud, which claims that Jesus was executed for "sorcery" among other offenses.)

In his reply, Jesus pointed out that it doesn't make that much sense for the Devil to be going around undoing his own work: "A house divided against itself cannot stand" (12:25). And if a person is capable of seeing with their own eyes a blind and mute person being miraculously healed, and not seeing it as an obvious sign of goodness, then it's hard to imagine any possible set of experiences which could convert such a person.

(An even more extreme version of "a house divided" would be if God deliberately creates such false miracles in order to fool or trick people, or to test their faith. I already rejected one such claim of divine deception in a previous post.)

Modern Supernatural Healings

But do such dramatic healings still happen today? I think they sometimes do.

I've previously mentioned St. Craig Keener's book on modern-day miracles. In addition to consulting secondary sources, he personally interviewed hundreds of individuals reporting miracles (including several individuals he knows well enough to vouch for their honesty). Keener discusses many miracles (mostly healings) some of which are extremely difficult to explain naturalistically.

He describes (with so many examples that it becomes quite tedious) many cases of instant or rapid healing of blindness, deafness, tumors, various disabilities, and even raising the dead, usually in response to prayer in the name of Jesus. In many cases the conditions clearly had organic causes (hence were not psychosomatic) and the healings were confirmed by before & after medical scans (including cases where the doctors had difficulty believing it was the same person, since the prognosis was so dire). He does not presume a supernatural explanation, but carefully considers alternative explanations. All in all, I found this book an extremely convincing refutation of Naturalism.

What Keener's book is not, is an exercise in comparative religion. Except for a brief chapter—which honestly felt like it was in the wrong book—discussing possible ancient parallels (e.g. temples to Asclepius, the god of healing), he confines his attention to Christian miracles. So far as one can tell from this book, it is at least possible that there are equally impressive healings in completely separate religions (although if so I am not aware of them).

Another possible foil might be the claims of Christian Science, which has "Christian" in its name, but is an extremely heretical interpretation of Christianity which denies the existence of evil, encourages its members to seek spiritual healing instead of getting medical treatment, and basically ignores anything in the Bible which doesn't contribute in some way to these ideas.

There are excellent reasons (one of them will be described in the next post) for not taking this movement seriously, but they might well serve as a "control group" for how often one would expect seeming healings to happen naturally by chance. (It's a delicate thing theologically though, because it is certainly not a doctrine of Christianity that God never heals anyone unless their beliefs about him are completely correct.) In any case, this topic would require doing further research for another blog post entirely, which I don't have much time for at the moment.

Genuine Non-Christian miracles?

In light of Jesus' arguments, I am unwilling to use demonic influence as an excuse to explain away any miraculous event which is both obviously good (e.g. a physical healing) and obviously real (i.e. the person was actually healed, and we aren't just talking about fakery, or coincidence, or people ignoring their cancer symptoms, or something like that).

In my worldview, any such supernatural grace (even if it were to occur in the context of a non-Christian religion) must always be attributed to the one true God. This is so even if the individual who receives the miracle has superstitious or unreasonable ideas about how the miracle came about.

Such a miracle might well provide some significant evidence for the basic validity of the religious tradition in question (at least to the extent of sometimes putting its worshippers in touch with the real God) but there is no reason I know of to think that God would be so stingy as to never do a miracle for anybody who was theologically misguided in any respect. (Though obviously, a Christian can't accept as valid any miracles that are specifically designed to authenticate a false prophet's religion.)

This is why I have no problem recognizing that there may be genuine religious miracles received by some people whose theological ideas I may not agree with in other respects (e.g. Roman Catholics, Pentecostals who subscribe to aspects of prosperity gospel thinking). And for all I know, God might also grant such miracles to some Muslims, modern Jews, pagans, etc.

As St. Keener says:

One of Hume's arguments against miracles is that incompatible religions claim miracles, and thus, on his view, their claims cancel each other....

He probably drew this argument from the deists, who in turn had used similar arguments of Protestants and Catholics polemicizing against each other's miracles. Hume advances this observation to argue that miracle claims as a whole are therefore suspect (part of a universally or at least widely tendentious religious rhetoric). But using this observed incompatibility as an objection to miracles fails to reckon with multiple potential philosophic alternatives to the objection. For example, such miracles could be understood as supreme power's "goodwill" toward people of different faiths "without necessarily endorsing" particular beliefs; the related idea that most miracles in response to prayers do not explicitly specify a particular religious system; the systems could be less incompatible than their adherents suppose; or one could argue that there are multiple supernatural or at least superhuman powers, a view held by traditional religion and even by most traditional forms of monotheism. (Miracles, p. 193, 195-196)

St. Brandon Watson, a historian of philosophy, makes a similar point:

Hume is turning popular anti-Catholic tropes and arguments, as used by Protestants, against Protestants as well. Protestant arguments about the gullibility of Catholics with regard to the miracles of the saints become Humean arguments about the gullibility of religious people generally with regard to miracles generally; Protestant arguments that we cannot rationally believe that transubstantiation occurs against the evidence of our senses find parallels in Hume's arguments against believing in religious miracles; and so forth. What is more, this seems not to have been lost on Hume's early critics; George Campbell, for instance, sees quite clearly what Hume is doing in (for instance) his long note on the Jansenist miracles, and, obviously, refuses to play the game, insisting that the parallels are artificial and based on false assumptions. In any case, these tropes were not typically in-principle arguments; they were based on claims about the mendacity of priests, the gullibility of poorly educated Catholics, and so forth.

In other words, the most famous example of a skeptical "Argument from Other Religions" is an adaptation of inter-religious Christian disputes, back when what was most often meant by a "false religion" was a rival interpretation of Christianity.

This raises the interesting question of whether this particular skeptical attack on religion would have had the same rhetorical appeal, if the Church had remained united (at least by the bonds of love, if not identical belief) rather than splintering into warring religious factions in the 16th and 17th centuries. Could it be that the "Argument from Other Religions" is really inspired by the long shadow of the religious wars of the Early Modern era?

Even if that thesis is too extreme, I think it is easy for people in the Western world to make arguments from comparative religion, without realizing that they are really projecting features of Christian doctrine onto other religions, which—when understood from a sympathetic view—don't even really pretend to base themselves on the same kinds of concrete historical miracle claims that support Judaeo-Christian doctrines.

In other words, many skeptics are too lazy to actually do any research about what a totally non-Christian religion looks like. Instead of doing research, it's easier to just compare Christianity to an imagined clone of itself, and find that on the whole Christianity comes out looking rather unoriginal.

So long as the skeptic confines himself to religions that actually are explicitly attempts to copy and surpass Christianity (e.g. Islam, Mormonism...) these expectations won't be totally misleading. But it is a mistake to think that a random Eastern religion with no connection to Christianity will appeal to the same kinds of evidential support that Christianity does. Such an approach would, ironically, project Christian values onto an essentially foreign milieu, and thus fail to see what is really going on in Eastern religions.

Next: Honest Messengers