[This is an updated version of an essay published at my own website in December 2014.—Scott Church]

As Christmas draws nigh, I am reminded of the many reasons why it’s my favorite holiday. It’s the culmination of my favorite time of year, when geese and swans are on the wing through crisp morning fog, the hills are on fire with the colors of dwindling annual life cycles in their foliage, and salmon fill the rivers, returning with such undying exuberance to complete a cycle of life as old as the cascades they leap with so much primal determination. My family and I visit a tree farm in the Cascade foothills and return with our Christmas tree. We decorate it, hang lights, and fill our home with Christmas carols, sacred hymns, and the canons of the season. Autumn wreath and other spicy scents waft from candles. The worshipfulness of the whole season gives me joy. But most of all, Christmas is the time when we remember that God chose to come down from Heaven and become one of us, sharing in the fleshly reality of our joys and sorrows, and offering His life as a loving sacrifice for ours. Unto us a Savior is born!

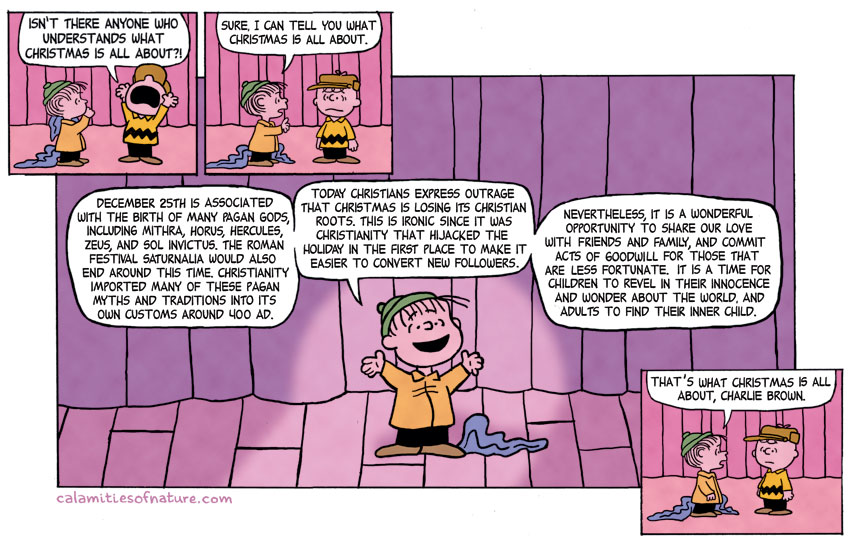

But like so many other things that bring joy and meaning to our lives, it also has a way of bringing some of the lamest ax grinders among us out of the woodwork like moths to the flame. We’ve all heard the endless prattling of benighted fundamentalists who take offense whenever someone says, “Happy Holidays!” instead of “Merry Christmas,” as though the Christmas story celebrated by 2.2 billion people worldwide is somehow threatened by anyone who doesn’t hold Christian beliefs and prefers to find their own meaning in the season. But for all their annoying priggishness, at least these people have some semblance of history and scholarship on their side. More bothersome (to me at least) are the self-proclaimed guardians of politically correct secularism who insist that Christmas, and indeed the entire body of Christian doctrine and tradition, were somehow stolen from pagan traditions by nefarious church leaders intent on suppressing them. The virgin conception and birth of Jesus, the manger, the visit of the magi bearing gifts, the date of December 25 and more… all, we’re told, originated in pagan myths such as those of the gods Mithras, Sol Invictus, Horus, and other ancient cosmogonies. Even the historical figure of Jesus Himself, His twelve disciples and the crucifixion and resurrection are said to have been plagiarized. Every fall, once the Thanksgiving decorations come down and the Christmas lights start going up, it’s only a matter of time before cartoons and Facebook memes like the one below start making the rounds in anti-religion social media chat rooms.

Apart from the boorish tastelessness of vandalizing a Christmas classic, every word of this is false and no reputable scholars take any of it seriously. Even so, the rise of such fashionable mythology within anti-religion circles makes for an interesting, and at times entertaining story. Verily, verily, human nature is a gift that keeps on giving.

The actual date of Jesus’ birth is not known. The gospels tell the Nativity story from different perspectives but contain few clues as to its date, and the next two centuries contain little extra-biblical evidence to supplement them. Surprising as it may be to some, the early church did not attach much significance to the birth of Jesus, preferring instead to celebrate his ministry, death and resurrection. Some Christian writers of the period even condemned the Roman practice of celebrating birth anniversaries as “pagan” practices (Origen), rendering it highly unlikely that they or the church would’ve been in the habit of celebrating the Nativity. Toward the end of the 2nd Century an interest in dating the birth of Jesus emerged in the Coptic Church of Northern Africa, and by 200 C.E. several dates were being proposed (Clement). During the 2nd Century some Christian writers saw intimations of Jesus in the vernal equinox and placed the Annunciation and the passion of Christ on or near the 14th day of Nisan (March 25 in the Julian calendar). Irenaeus (c. 130–202) made this claim and linked it to the crucifixion as well, as did Tertullian of Carthage (Tertullian), Hippolytus and the pseudo-Cyprianic (Talley, 1986). In 243 C.E. an anonymous work titled De Pascha Computus suggested that the creation of the sun and the Annunciation both occurred on or near the vernal equinox as well (McGowan, 2002).

The notion that the Annunciation and passion of Christ, as well as creation, should fall on the vernal equinox was widespread by the mid-3rd Century, and by the middle of the 4th Century celebrations of Christmas had converged on two dates: December 25 in the West and January 6 in the East. Valentinus' Chronography of 354 refers to a Christian liturgical feast denoted as "Natus Christus in Betleem Judeae: Christ was born in Bethlehem of Judea." By this time the Donatists of Northern Africa were also honoring the December 25 date and appeared to have been doing so since their inception as a church under the persecution of Diocletian in 312 C.E. (McGowan, 2002). In the East, where the birth of Christ had been tied more strongly to the Epiphany, Christmas was celebrated on January 6. The period between the two dates came to be known as the Twelve Days of Christmas. By 388 C.E. the December 25 date had been imported into the Eastern Church as well by John Chrysostom, who gave a sermon claiming, “Our Lord, too, is born in the month of December ... the eight before the calends of January [25 December] ..., But they call it the 'Birthday of the Unconquered'. Who indeed is so unconquered as Our Lord ...? Or, if they say that it is the birthday of the Sun, He is the Sun of Justice…” (Martindale, 1908; Roy, 2005; Wainwright and Tucker, 2006).

So, by the mid-3rd Century Christian writers had placed the conception of Jesus on the vernal equinox, leading to a birth date of December 25 (Duchesne, 1919; Alexander, 1994; Roll, 1995; Talley, 1996; Wybrew, 1997; McGowan, 2002; Roy, 2005; Senn 2006, 2012; Rothenberg, 2011). By the middle of the 4th Century, liturgical feasts had been marking the date for some time and had almost certainly been doing so before Constantine took control of the Western Empire in 312 C.E.
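The arithmetic behind that inference is easy to check: nine calendar months after March 25 is December 25. The following is only a minimal sketch, assuming a gestation counted as nine whole calendar months. The helper `add_months` is a hypothetical name introduced for illustration, and the year is arbitrary because only the month and day matter to the argument.

```python
from datetime import date


def add_months(d, months):
    """Return the same day-of-month `months` calendar months after `d`.

    Illustrative helper only. It assumes the target month contains that
    day, which is true for the 25th of any month.
    """
    total = d.month - 1 + months
    return date(d.year + total // 12, total % 12 + 1, d.day)


# Assumption: the year is a placeholder; only month and day are compared.
annunciation = date(1, 3, 25)
nativity = add_months(annunciation, 9)
print(nativity.month, nativity.day)
```

The same calculation applied to an April 6 conception date would land on January 6, the date observed in the East.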

It’s important to note that prior to Constantine Christians were a persecuted minority. Official state sanctions against Christians were desultory throughout the 2nd Century and escalated to Diocletian’s Great Persecution from 303 to 311 C.E., during which as many as 20,000 Christians were executed for not bowing down before the officially recognized gods of Rome. They were hardly in a position to “usurp” any pagan festivals and in fact, for reasons of religion and physical safety they were actively trying to distance themselves from them. Prior to the 4th Century Christian writings make no references to altering, or otherwise laying claim to any pagan holidays or dates (McGowan, 2002). It was during this period (274 C.E.) that Aurelian declared Sol Invictus (“Unconquered Sun”) the official sun god of Rome and officially established the festival Dies Natalis Solis Invicti on December 25 to commemorate him. Sun god worship had been present in Rome in one form or another since before the 1st Century. But whereas Christian writers had established arguments for the birth of Jesus on this date by 200 C.E., there is little evidence to suggest that feast days commemorating Sol Invictus were celebrated prior to the mid-4th Century (Wikipedia, 2017). In fact, evidence suggests that Natalis Invicti may have been a response to December 25 Christian liturgical feasts rather than a motivator for them (Tighe, 2003). It wasn’t until Constantine issued the Edict of Milan in 313 C.E. that persecution of Christianity ended (only to be renewed to some degree by Julian the Apostate from 361 to 363 C.E.), and Christianity didn’t become the state religion of Rome, acquiring the power to “usurp” any pagan practices, until 380 C.E. under the reign of Theodosius I (Wikipedia, 2017b).

But according to our cartoon historian… a minority of Christians launched a sinister plot to steal the festival of Natalis Invicti from the pagans who were persecuting them 50 to 70 years before it was even practiced, and nearly two centuries before the church had any sanctioned power to do so.

What about Saturnalia? It originated as a festival for farmers in honor of Saturn (from satus for "sowing") that marked the end of the autumn planting, and was practiced in one form or another from as early as 217 B.C. until well into the 5th Century C.E. Originally a two-day affair beginning around December 17, it eventually became a week-long festival culminating on December 23 (Salusbury, 2009; Wikipedia, 2017c). Though it has been suggested that the festival may have been extended to December 25 by Domitian (51-96 C.E.) during his reign as an assertion of authority (Salusbury, 2009), for the bulk of its C.E. history it was a 5-7 day festival that culminated with the Sigillaria (day of gift giving) on December 23. Its timing does not align well with the December 25 or January 6 dates for Christmas, and it's very unlikely to have had any influence on the church's adoption of either date (Gwynn, 2011).

But if Sol Invictus and Saturnalia are questionable Christmas story candidates, the cult of Mithras is downright ludicrous. Mithras was a Roman reinvention of the ancient Indo-Iranian angelic deity Mithra (Sanskrit, Mitra), the guardian of covenant and oath, harvest, cattle, and water. He was the all-seeing protector of truth, and the divinity of contracts and judicial process (Wikipedia, 2017c). He is first mentioned in the Rig Veda circa 1400 B.C. after which his worship spread to the Persian world through Zoroastrianism where he was known as Mithra. It’s unclear whether Zoroaster himself embraced Mithra, but he appears throughout the Zoroastrian Avesta (particularly the Khorda Avesta, or Book of Common Prayer) possibly as early as 559 B.C. He entered the Hellenic world as Mithras when Alexander the Great conquered Persia in the late 4th Century B.C. Roman Mithraism first appears in the historical record late in the 1st Century C.E. and flourished throughout the empire, particularly among the military, until the 4th Century. Unlike other pagan religions of the period, Mithraism was a mystery religion whose doctrines, rituals and festivities were closely guarded secrets. No scriptures, writings or first-hand worship accounts are known to exist apart from a handful of catechisms and one 4th Century liturgy. Everything that is known about it has been derived from inscriptions at archaeological sites and second-hand commentary about it in the writings of contemporary outsiders (Clauss, 2001; Pearse, 2012; Pearse, 2012; Wikipedia, 2017e). There is general scholarly agreement that although he was derived from the Zoroastrian tradition, the Roman Mithras was noticeably dissimilar to his Persian counterpart and today he is regarded as a distinct product of the Roman Imperial religious world (Wikipedia, 2017c, 2017d, 2017e; Encyclopedia Britannica, 2017). It’s important to note that syncretism was a common feature of Roman paganism and Mithraism was no exception. 
Most archaeological finds associated with the worship of Mithras contain statues dedicated to other gods, and inscriptions dedicated to Mithras were commonplace in other cult sanctuaries. Roman Mithraism was more a way of practicing pagan worship than a religion in its own right, and Mithras' worshippers were often found worshipping other gods in the civic religion. Mithraism was far more likely to have been influenced by other religions than to have been an influence on any of them (Burkert, 1987; Clauss, 2001; Pearse, 2012).

Nevertheless, attempts have been made to explain Christianity away as a plagiarism of Roman Mithraism. The idea that the two might be related was first suggested during the 19th Century by Renan (1882) based on a criticism of Mithraic rituals by Justin Martyr (155-157 C.E.). This in turn led to decades of speculation culminating in numerous alleged similarities between Mithras and Jesus, including (but not restricted to) that he was born of a virgin on December 25, crucified and resurrected after 3 days, marked with the sign of a cross, and attended by 12 disciples. Apart from superficial similarities no real evidence exists for any of these claims and few if any scholars take them seriously. They survive mostly as urban legends circulated by New Age and/or New Atheist communities (Pearse, 2012, 2014; Wikipedia, 2017f). In fact, given that Christianity predates Roman Mithraism by nearly half a century, what few similarities there are appear to be the result of Mithraism borrowing from Christianity rather than the other way around (Nash, 1984; Pearse, 2012). Of the current myths regarding Mithras and Christianity, the ones most relevant to Christmas are that he was born on December 25, and that his was a virgin birth.

The December 25 date is based entirely on conflations of Mithras with Sol Invictus. The sun (Sol) figures prominently in Mithraic tales like The Banquet of the Sun, and he was often referred to descriptively as sol invictus (the unconquered sun), but never by formal title. Sol Invictus and Mithras were separate deities. The title “Invictus” was given to a number of pagan deities (not unlike “Reverend”) and wasn’t reserved for Sol Invictus alone. In fact, Sol Invictus and Mithras are shown together in a number of scenes as separate deities (including The Banquet of the Sun). Some feature Mithras ascending behind Sol in the latter's chariot, the deities shaking hands, and sharing pieces of meat at the altar on a spit or spits. One even shows Sol Invictus kneeling before Mithras (Clauss, 2001; Beck, 2004). No other mention of December 25 relating to Mithras occurs anywhere in the ancient record, and there is no evidence to suggest that the state sanctioned Roman festival of Sol Invictus was related to Mithras in any way.

Attempts to ascribe a virgin birth to Mithras are downright bizarre. The historical record contains two accounts of his birth: The Roman version, and the Indo-Iranian version that preceded it. In the former Mithras is depicted as emerging fully grown from a rock in a cave bearing a torch or dagger and wearing a Phrygian cap after which his first act was the slaying of a bull (Clauss, 2001). Some accounts associate the rock of his birth to the water god Oceanus and it serves as a fountain. The Indo-Iranian myths are similar with a few variations. Here Mithra is born of a rock by the shore of Araxes (Widengren, 1966). Some have claimed that the Vedic tradition depicts Mitra as being born to the virgin goddess Anahita, but this is difficult to defend as that tradition portrays Mitra as her consort rather than her son (Lindemans, 1997). In any event, this aspect of the Vedic tradition appears to have had little or no impact on the Zoroastrian Mithra or the Roman Mithras.

Perhaps I’m missing something, but if there’s any similarity here to the virgin birth of Jesus or any other Christian doctrine I’m not seeing it. I doubt many virgins would take kindly to being equated with wet rocks or consorts.

Finally, we come to my personal favorite—Horus.

Horus, who was one of the oldest and most significant gods of the Egyptian pantheon, was worshipped from the late Predynastic period to the Greco-Roman era. The earliest records portray him as the patron deity of Nekhen, the first known national god of Upper Egypt. Most commonly he was portrayed as a falcon and the son of Isis and Osiris, but in some traditions Hathor, goddess of joy, feminine love, and motherhood, is his mother or wife. Horus fulfilled numerous functions. Most notably he was the god of the sky, sun, war and protection. In some records he is described as containing the sun and moon as his right and left eyes, which traversed the sky when he flew across it (Wikipedia, 2017h). Among the festivals of ancient Egypt Horus figures most prominently in Heb-Sed, which honored his father, the god Osiris, in a series of rites that celebrated him as dead, dismembered, and reconstituted. There he is celebrated as Osiris’ son, alter-ego and eternal avenger. Heb-Sed culminated on the last day of Khoiak with a ceremony in which four arrows were shot in four directions to ward off evil powers and acknowledge the rule of Pharaoh and the role of Horus in his father’s battles (Roy, 2005). In addition to Heb-Sed, Plutarch reports that the birth of Elder Horus (one of many variations of the Horus myth) was observed on the second epagomenal day of the Egyptian calendar (Plutarch, 1936).

The birth of Horus is recounted in the myth Isis and Osiris. In most versions of the myth he is born to the goddess Isis after she retrieves the dismembered body parts of her murdered husband Osiris, except his penis, which was thrown into the Nile and eaten, depending on the account, by a catfish or a crab. Plutarch reports that when Isis was unable to retrieve Osiris’ penis she used her magic to fashion one from gold and impregnated herself with it. Some versions portray Isis either as reviving Osiris enough to have an erection via the refashioned penis, or reviving the penis itself (NYFS, 1973; Lesko, 1999; Scholtz, 2001; Shaw, 2003).

The Egyptian calendar was primarily lunar and varied in both time and population sector across the Early, Middle and Late Kingdoms. Often it was driven more by seasonal cycles (e.g. flooding of the Nile) than by explicit astronomical events. The five epagomenal days were included to account for solar/lunar calendar creep (Wikipedia, 2017g; Meyboom, 1995). The Coptic calendar introduced by Ptolemy III in 238 B.C. was based on it, with the primary difference being the addition of a 6th epagomenal day. Depending on Kingdom period, Khoiak roughly overlaps September and October, or November to January in the Gregorian calendar. In the Coptic calendar it runs from the Gregorian calendar period of December 10 to January 8, which translates to November 27 to December 26 in the Julian calendar (Wikipedia, 2017g). The second epagomenal day of the Egyptian calendar corresponds to an astronomical date of July 31. No historical or archaeological record of any kind directly or indirectly ties the birth of Horus to December 25.
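The Gregorian-to-Julian translation quoted above can be checked with a few lines of date arithmetic. This is only a sketch, assuming the present-day 13-day gap between the two calendars (which holds from March 1900 through February 2100). The function name `julian_month_day` and the years 2017 and 2018 are illustrative choices, not taken from any source.

```python
from datetime import date, timedelta

# Assumption: the Julian calendar currently runs 13 days behind the
# Gregorian calendar (valid from March 1900 through February 2100).
JULIAN_LAG = timedelta(days=13)


def julian_month_day(gregorian):
    """Return the (month, day) that the Julian calendar shows on a Gregorian date."""
    shifted = gregorian - JULIAN_LAG
    return (shifted.month, shifted.day)


# Khoiak in the Coptic calendar, expressed as Gregorian dates.
# The years are placeholders chosen for illustration.
khoiak_start = julian_month_day(date(2017, 12, 10))
khoiak_end = julian_month_day(date(2018, 1, 8))
print(khoiak_start, khoiak_end)
```

Under that assumption the month runs from November 27 to December 26 in Julian terms, matching the figures cited.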

So... Never mind alleged plots to steal Christmas Day from a Roman sun god’s holiday decades before it even existed, or “virgin” birth stories based on another god’s emergence from a wet rock, let me get this straight…

Osiris is murdered and dismembered, his johnson is whacked off, tossed into the Nile River, and promptly eaten by crabs…

But not to be deterred, his nubile young bride fashions for herself a magical golden dildo, screws herself silly with it, has a cigarette afterward, and spits out sweet, cherub-faced Horus…

And this, we’re told, is where Christians get their story of the *cough* virgin *cough* birth of Jesus of Nazareth to a first century Hebrew peasant girl.

On my best day, I couldn’t write material this good if I tried! :D

What any of these epic tales have to do with Christmas, Jesus of Nazareth (an historical figure), or Christianity remains to be seen (Wikipedia, 2017b; Nash, 1994). But that hasn’t kept legions of secular conspiracy theorists from inventing ways to connect them, which raises the question of why such ideas have so much cachet today. Clearly, scholarship isn’t involved, so what is? Having followed this sort of thing for some time, I believe there are at least three factors fueling its popularity.

First, there’s the general public’s fascination with pseudoscientific and/or controversial ideas, and the fact that there’s no shortage of people with an ax to grind against traditional Christianity (unfortunately, not always without cause). To those with anti-religion agendas, speculations of Christian plagiarism are a bloody 10-pound pot roast in a shark tank. Given their well-known fascination with genetic fallacies, New Atheists are particularly vulnerable to this sort of thing. Genetic fallacies assert that if the origin of some idea or belief can be accounted for it is thereby explained away, which is of course, false. The truth or falsehood of a belief has nothing whatsoever to do with how it was acquired (evolution has equipped us with binocular vision for instance, which gives us depth perception and the ability to ascertain curvature, but it doesn’t follow that the earth is flat or that space isn’t three-dimensional). Yet numerous popular books like Richard Dawkins’ The God Delusion are based almost entirely on the belief that if the origin of religious doctrines can be accounted for historically or psychologically they are thereby falsified. Christian conspiracies are bound to play a significant role in such works whether there’s any evidence for them or not.

Second, it is true that there are some superficial similarities between Christianity and ancient paganism. Dates sacred to both traditions do tend to be grouped together for instance, and on occasion, they even overlap. But the real reason is more pedestrian than any conspiracy. To the ancients, the sun was an obvious object of reverence, and thus, an obvious choice for a god. To Christians, it was an equally obvious symbol of God’s bounty and life-giving provision, and its seasonal cycles were given the utmost significance. Equinoxes were associated with planting and harvest, burgeoning life and death, and because the winter solstice is the shortest day of the year, it was natural to equate it with the birth of the sun and the coming year. So, it’s little wonder that pagan festivals would cluster around these astronomical dates. And as we saw earlier, for reasons that had nothing whatsoever to do with any pagan tradition Christians came to associate the vernal equinox with the Annunciation and the passion of Christ, thereby placing his birth on or near the winter solstice as well.

There is a well-known logical fallacy referred to as cum hoc ergo propter hoc (Latin: "With this, therefore because of this"), which is the assumption that correlation implies causation. B correlates with A, therefore A caused B. This is also false. Two or more events might correlate by coincidence (accidents do happen, after all), or they might both be separate consequences of something else. Events cannot be causally connected until these possibilities have been ruled out. In this case, they haven’t. The fortuitous alignment of Christian and pagan sacramental holidays is a natural consequence of the fact that the earth has seasons because its rotational axis isn’t perpendicular to its orbital ecliptic plane… in other words, astrophysics. No sinister, politically incorrect, anti-pagan conspiracies or cover-ups are involved.

It is true that after the 4th Century Christians incorporated many pagan traditions into Christmas celebrations and continue to do so to this day. My family and I put up Christmas lights and exchange presents, both practices inherited from Saturnalia. We also put up a Christmas tree, a custom which may have been borrowed from pre-Christian pagan traditions although this is speculative at best (Wikipedia, 2017i). I have many atheist and agnostic friends who do so as well. Does this mean we all believe in Mithras or Sol Invictus, or that we're plotting to suppress pagan ideas or steal their traditions? Of course not. We incorporate them because we find them beautiful and meaningful to us personally. We have no desire to inhibit anyone else’s worship, only to practice our own with whatever symbols and ceremonies speak to our hearts. Apart from prejudice, there's no reason to believe the early church as a whole was any different.

But to date, arguably the biggest factor in the spread of these ideas was a 2007 pseudo-documentary called Zeitgeist, the first of a three-part series that eventually led to an international movement of the same name. The Zeitgeist series promoted a number of conspiracy theories not the least of which were that,

- The 9/11 attacks were orchestrated by “New World Order” forces and the World Trade Center was deliberately brought down by a controlled demolition.

- A global cabal of bankers has been manipulating world events.

- The Federal Reserve was behind the sinking of the Lusitania, Pearl Harbor, the Gulf of Tonkin incident, and several wars including the Vietnam War.

- All humans will be implanted with RFID chips to monitor behavior and dissent.

All of which and more, we’re told, is part of a global plot to set up a religiously motivated “New World Order” (Wikipedia, 2017j).

The religiously motivated part is key to the movie’s claims. Zeitgeist is based on the so-called “Christ Myth” theory, an idea that originated during the 19th Century and has since assumed many forms, most of which have been shaped more by intellectual and cultural fashion than anything concrete. According to the Christ Myth, Jesus of Nazareth either never existed or had nothing to do with the origin of Christianity if He did, and Christianity was derived entirely from various pagan myths. Early in its history it had at least some scholarly support (particularly in the years prior to WWII when archaeology and text criticism were still in their infancy) but advances in these and other fields have relentlessly eroded what little support it originally had (Wikipedia, 2017k). Today few scholars take it seriously and it is confined almost exclusively to New Age conspiracy theorists and anti-religion activists like Richard Dawkins and the late Christopher Hitchens. In its most extreme forms, the Christ Myth goes so far as to claim that Christianity was intentionally crafted by secretive religious cabals intent on gaining global power by eradicating pagan traditions. This is the starting point for the movie’s claims. The Christ Myth is at the root of nearly every claim made in Zeitgeist and the movie and its sources have become something of a one-stop-shopping kiosk for its defense. Skeptic Magazine described Zeitgeist as “The Da Vinci Code on steroids” (Callahan, 2009) and in fact, much of the movie’s content is strikingly similar to that series. A review of its sources (Joseph, 2007) yields little more than armchair archaeology, occult works (including one on “astrotheology and shamanism”), conspiracy theories and New Atheist agitprop. At best no more than 2 or 3 could be considered even remotely scholarly, and the most recent of these is nearly 60 years old.

But the real heavy lifting comes from the works of one Dorothy M. Murdoch, who publicly goes by the name “Acharya S” (Bertlet 2011; Winston, 2007; Callahan, 2009). Acharya is a Hindu term for a Brahmin teacher or guru, and as near as I can tell, the “S” doesn’t stand for anything. Murdoch, whose personal website is called “Truth Be Known,” was Zeitgeist’s primary consultant. Now I can’t speak for anyone else, but where I come from, a website named “Truth Be Known” run by someone who goes by the moniker “Guru [Capital Letter]” has wingnut written all over it. So, I decided to have a look at Ms. Murdoch’s credentials, and surprise, surprise… she has none. The Bio and Credentials pages at Truth Be Known go to excessive lengths to convince us that she really does have some relevant expertise. There, she informs us that,

“While I myself am 'self-taught' in the sense that I developed a fascination for learning certain subjects at an early age, unlike the bulk of my detractors I actually do have formal, academic credentials relevant to my field of expertise.” (Truth Be Known, 2017)

What are these “formal, academic credentials,” you ask…?

- "Schools in a small town known for its emphasis on academic excellence" including a 2nd Grade "experimental" program.

- Growing up on a “small farm” with “loads of animals” and “fields and woods all around” where she learned “the nature-worshipping roots of many religious concepts.”

- Serving as trench master on a few “archaeological excavations” in Corinth, Greece, and Connecticut (!).

- Expertise in “esoterica” and other “mystical studies.”

Etc. etc. Naturally, details of the archaeological digs are carefully omitted, as are arguments for their alleged relevance to the origins of any Abrahamic religion, including Christianity (why she thinks a dig in Connecticut would have anything to do with either is anyone’s guess). Murdoch claims to have been “classically educated at some of the finest schools…” but the only verifiable education she has beyond high school is a BA in Classics from a small Pennsylvania college that she extols as one of America’s most august “potted Ivy League” institutions (which no one I’ve encountered has ever heard of). Murdoch also makes much of her alleged “membership” in the American School of Classical Studies at Athens. But a check of that institution’s website reveals no mention of her among its faculty or alumni. Callahan (2009) even contacted many people affiliated with the school, past and present, and was told that neither they, nor anyone they knew had ever heard of her. And Lord only knows what passes for “esoterica” and “mystical studies” (although I suspect some hallucinogens and a bottle of Night Train Express might render them more accessible).

The bottom line…? Murdoch is a New Age crank who has no formal education or professional experience in any field relevant to the topics she writes about. When one must devote multiple website pages to convincing others of their qualifications, even to the point of extolling their 2nd Grade education, it’s because those qualifications don’t speak for themselves. She, of course, defends this…

“The ‘credential argument’ frequently constitutes an ad hominem attack, especially in the case of individuals who disagree with mainstream perspectives. In reality, it is not always necessary to have perfect and proper credentials to become an expert or authority in a subject, or even to understand it.” (Truth Be Known, 2017)

True enough. But while none of the above specifically refutes any of her claims per se, at the very least it calls her objectivity and competence into question, particularly since by her own admission her views are outside of “mainstream perspectives” (i.e. credible peer-reviewed scholarship). Reasonable people who are as lacking in qualifications as she is would be the first to admit that and would approach subjects like this with at least some humility. They would make every effort to ground their investigations in broadly-based extant research and solicit professional feedback whenever possible before running with any conclusions they reach.

There’s a term for people who are certain of their beliefs, and see themselves as visionaries persecuted by mainstream academia… they’re called crackpots.1

Which brings us to her seminal work, The Christ Conspiracy (Acharya, 1999), which is the primary source for Zeitgeist’s Christ Myth claims. Most of the movie’s other sources were taken from there as well, and as of January 26, 2008 many of these also cited it in return (Callahan, 2009). This is hardly surprising. Incestuous scholarship is rampant in Christ Myth circles. The same handful of conspiracy theorists and cranks routinely cite each other in circles, seldom venturing into peer-reviewed research. On the rare occasions that they do, they invariably cite it out of context. Murdoch even goes so far as to cite herself as an “independent” source for her claims. She is known for citing “D.M. Murdoch” as a source while publishing under her Brahmin guru name, and vice versa. As of this writing many of Zeitgeist’s original sources appear to have been removed from the Companion Guide, most likely because Murdoch and the movie’s producers have been covering their incestuous and/or discredited tracks. In what follows I will restrict myself to general comments about the book. First, because the content in it that is most relevant to the topic at hand, Christmas, has already been addressed. And second, because frankly, the content that isn’t erroneous is negligible and a reasonably complete catalog of its countless blunders would take up volumes.

Beyond a doubt, The Christ Conspiracy is one of the most amateurish and incompetently researched works I’ve ever seen. From start to finish it ricochets between hysterical anti-religion diatribes and arguments that range from questionable to schizophrenic. Every page contains numerous errors that even 10 minutes’ worth of fact-checking would have corrected. To wit:

- Murdoch claims the 12 disciples of Jesus were taken from the 12 signs of the zodiac. The basis for this appears to be a carving showing Mithras surrounded by the 12 signs of the zodiac, which Murdoch arbitrarily labels “disciples.” Similar claims are made about Horus in spite of the well-established fact that he is mythically portrayed as having four semi-divine disciples called “heru-shemsu,” or “followers of Horus” (Traunecker, 2001). Seattle Seahawks fans refer to themselves as the “12th Man.” If this sort of reasoning and carelessness with words like “disciple” were taken at face value, then football teams and their fans would be borrowing from the zodiac as well.

- She quotes Acts 11:26 as saying that the first Christians were found in Antioch, but claims there was no extant Gospel there until 200 C.E. A simple reading of the text reveals that the disciples of Jesus were first called Christians there. Prior to that they were known as “disciples.” In virtually every modern Biblical translation even a casual inspection of the passage makes this obvious, yet somehow it eludes Murdoch. There is almost unanimous scholarly consensus that all four written Gospels were in circulation prior to the 2nd Century and their content had been passed by oral tradition long before that. In fact, the evidence suggests that the Gospel of Matthew was written in Antioch between 50 and 70 C.E. (Harris, 2010; Brown, 1994; and many others).

- Murdoch repeatedly associates the “Son” of God with the Sun of God, arguing that “son” and “sun” are the same word. Apparently, no one told her that the modern English language didn’t exist prior to the 16th Century, which makes conflating the two during the First Century a really neat trick. The Hebrew, Greek, and ancient Egyptian equivalents aren’t even remotely similar to each other either. One would think this should be obvious to someone with a BA in Classics from a “potted Ivy League” college. Apparently not.

And so on, and so on…

The book is riddled with errors like these. One struggles to find even five or six consecutive sentences that don’t contain at least one blunder that any attentive investigator would have caught. At times Murdoch’s assertions are downright bizarre. At one point we’re told that,

“To deflect the horrible guilt off the shoulders of their own faith, religionists have pointed to supposedly secular ideologies such as Communism and Nazism as oppressors and murderers of the people. However, few realize or acknowledge that the originators of Communism were Jewish (Marx, Lenin, Hess, Trotsky) and that the most overtly violent leaders of both bloody movements were Roman Catholic (Hitler, Mussolini, Franco) or Eastern Orthodox Catholic (Stalin), despotic and intolerant ideologies that breed fascistic dictators. In other words, these movements were not 'atheistic,' as religionists maintain.” (Acharya, 1999)

Never mind whether “deflecting guilt” is the only reason “religionists” (or anyone else) might oppose gas chambers and gulags. Apparently, being Jewish by race makes one Jewish by religion as well... even if said “Jew” has the most vehemently atheistic worldview imaginable. Murdoch doesn’t like Jews very much, and rarely misses an opportunity to castigate them—a fact which works very nicely with Zeitgeist’s anti-Semitic conspiracy theories. She also seems to think that being born into a religious family makes one religious as well. Mussolini, for instance, was a well-known atheist, and Hitler, who considered Christianity to be “nonsense founded on lies,” spoke positively of it only when doing so was necessary as propaganda (Wikipedia, 2017m; 2017n). Yet somehow, to Murdoch both pass for “Roman Catholic.” Richard Dawkins was born in Kenya to Anglican parents and was a Christian until halfway through his teenage years (Hattenstone, 2003). By her logic, that makes him a Christian. I wonder if he would agree with that assessment.

Like most works of its kind, The Christ Conspiracy is heavily sourced to like-minded lay writers publishing outside of the scientific peer-reviewed process, and what little is not is invariably quoted out of context. But most of the book’s content regarding Egyptology and religious development in the ancient world can be traced to two 19th Century authors, Gerald Massey and Helena Blavatsky. Massey was a poet and spiritualist who also pursued Egyptology as a hobby (hence all of Murdoch’s nonsense about the god Horus). He had no formal education of any kind. Blavatsky was a spiritualist and occultist best known for founding the Theosophical Society. Broadly speaking, Theosophy (as taught by Blavatsky and the Theosophical Society) is founded on a doctrine referred to as The Intelligent Evolution of All Existence occurring on a “cosmic” scale involving the “physical and non-physical aspects of the known and unknown Universe.” Blavatsky believed the human race is part of the great “cosmic evolution” passing through a series of “Root Races,” the current being the Aryan, or Fifth Root Race. These Root Races are not ethnicities, but “evolutionary stages” of human development. The Fourth Root Race was in Atlantis, and the Sixth and final Root Race will be the “Spiritual” Root Race (Wikipedia, 2017o; 2017p). Blavatsky denied that Theosophy was a religion, preferring instead to call it “divine science” (as though study of the Divine isn’t religious in any way… like most occult thinkers, Blavatsky’s terminology and concepts tend to be muddled). She is considered by many to be the founder of the modern New Age movement.

And there you have it, folks. The Christ Myth theory, touted far and wide as a “scientific” investigation of the origin of Christianity, ultimately boils down to…

The Da Vinci Code.

“Astrotheology,” pseudo-archaeology, Atlantis, anti-Semitic conspiracy theories… This is what our cartoon historian and other like-minded ambassadors for “reason” are offering as a rational alternative to Christianity and the traditional Christmas story.

Interestingly, the only professional affiliation of Ms. Murdoch’s that actually does check out is a 2005-2006 fellowship at the Council for Secular Humanism's Committee for the Scientific Examination of Religion (Wikipedia, 2017q). Apparently, in secular humanist circles “astrotheology,” “esoterica,” and Jewish bankers plotting to take over the world and microchip us all pass for “science.”

We pay a steep price when we allow fashionable “just so” stories to take precedence over properly researched facts. Not only do we make fools of ourselves, we miss out on the richness of a deeper understanding of the world and the best that is in us… the best in our souls. In the authentic version of the Peanuts cartoon above Linus quotes Luke:

And there were in the same country shepherds abiding in the field, keeping watch over their flock by night. And lo, the angel of the Lord came upon them, and the glory of the Lord shone round about them, and they were sore afraid. And the angel said unto them, “Fear not, for behold, I bring you good tidings of great joy, which shall be to all people. For unto you is born this day in the City of David a Savior, who is Christ the Lord. And this shall be a sign unto you: Ye shall find the Babe wrapped in swaddling clothes, lying in a manger.” And suddenly there was with the angel a multitude of the heavenly host praising God and saying, “Glory to God in the highest, and on earth peace, good will toward men!” (Luke 2:8-14)

Unto us a Savior is born.

The word gospel comes from the Old English god-spell, a translation of the Greek εὐαγγέλιον, which means good news. Good news indeed! God was not content just to gaze down upon us with pity from a safe and distant Heaven. He chose to be born into our world… to become one of us, see the world through mortal eyes, mingle His tears with ours, and die on our behalf. The writer of Hebrews compares Jesus to the Old Testament high priest Melchizedek, and goes on to say,

"For we do not have a high priest who is unable to empathize with our weaknesses, but we have one who has been tempted in every way, just as we are—yet he did not sin. Let us then approach God’s throne of grace with confidence, so that we may receive mercy and find grace to help us in our time of need." (Heb. 4:15-16)

On December 25, before my morning coffee… before watching my daughter tear into her presents under our Saturnalia tree and lights… I kneel before God and thank Him for entering the world. I thank Him for entering it as a peasant, not a king… I thank Him for suffering every temptation and hardship I do, so that He may walk beside me truly knowing what it’s like to be me in this vale of tears called life…

Most of all, I thank Him for laying down His own life to guarantee me a way through it, even though I do not deserve one and never have. To me, and 2.2 billion Christians around the world, this is the true meaning of Christmas!

Many people do not share my Christian faith—in fact, most of humanity doesn’t. Some have sought God along other paths. Others are still searching for Him as best they can. Some have come to the honest conclusion that He simply doesn’t exist because so far, they’ve been unable to find evidence that speaks clearly enough to their listening ears. What all these folks have in common are open eyes, open hearts, and open hands. They are ready to receive a gift, and to whatever extent they’re able they will find their own meaning in the Christmas season and celebrate it with thanks. But many others mark the season with clenched fists. They have axes to grind—with God, with religion, with the church, perhaps with the very spirit of the holiday itself—and are more interested in defending personal ideological turf than receiving gifts. I imagine many of these folks enjoy the Christmas season with family and friends, and perhaps take something away from it despite that. But it’s sad to see people miss out on the deepest meaning of Christmas and God’s blessings for them, simply because they refuse to let go of ideas that wouldn’t survive even 30 seconds of due diligence.

I wish for everyone God’s richest Christmas blessings. Whatever our beliefs may be, and however we choose to celebrate it, may we do so in spirit and in truth… with open minds, and open hands rather than clenched fists.

Footnotes

1) Incidentally, Murdoch’s critics aren’t restricted to the religious. Case in point, New Testament scholar Bart Ehrman, whose work on textual criticism and the historical Jesus has led to much academic controversy in its own right (a topic for a separate essay). Ehrman, who describes himself as “an agnostic leaning toward atheism,” is hardly a friend of traditional Christianity. But although he disputes the picture of the historical Jesus portrayed in the Gospels, regarding The Christ Conspiracy he says, "all of Acharya's major points are in fact wrong..." and that the book "is filled with so many factual errors and outlandish assertions that it is hard to believe the author is serious…" He goes on to say that, "Mythicists of this ilk should not be surprised that their views are not taken seriously by real scholars, mentioned by experts in the field, or even read by them" (Ehrman & Dixon, 2012).

References

Acharya, S. (1999). The Christ Conspiracy: the greatest story ever sold. Adventures Unlimited Press.

Alexander, J. N. (1994). Waiting for the Coming: The Liturgical Meaning of Advent, Christmas, Epiphany. Pastoral Press. ISBN-10: 1569290113; ISBN-13: 978-1569290118. Available online at http://www.amazon.com/Waiting-Coming-Liturgical-Christmas-Epiphany/dp/1569290113/ref=sr_1_1?ie=UTF8&qid=1418062289&sr=8-1&keywords=Waiting+for+the+Coming%3A+The+Liturgical+Meaning+of+Advent%2C+Christmas%2C+Epiphany. Accessed December 6, 2017.

Anderson, M. A. (2008). Symbols of Saints: Theology, Ritual, and Kinship in Music for John the Baptist and St. Anne (1175-1563). ProQuest. ISBN 978-0-54956551-2, pp. 42–46.

Beck, R. (2004). "In the Place of the Lion: Mithras in the Tauroctony" in Beck on Mithraism: Collected Works with New Essays. Ashgate Pub Ltd. ISBN-10: 0754640817; ISBN-13: 978-0754640813. Pgs. 286-287. Available online at http://www.amazon.com/Beck-Mithraism-Collected-Contemporary-Thinkers/dp/0754640817/ref=sr_1_1?ie=UTF8&qid=1418595723&sr=8-1&keywords=Beck+on+Mithraism. Accessed December 6, 2017.

Bertlet, C. (2011). "Loughner, 'Zeitgeist - The Movie,' and Right-Wing Antisemitic Conspiracism". Talk To Action Online, Jan. 14, 2011. Available online at http://www.talk2action.org/story/2011/1/14/92946/9451. Accessed December 6, 2017.

Brown, R. E. (1994). The Death of the Messiah: From Gethsemane to the Grave: A Commentary on the Passion Narratives in the Four Gospels. 2 vols. New York: BantamDoubleday. ISBN-10: 0385471777; ISBN-13: 978-0385471770. Online at www.amazon.com/Death-Messiah-Gethsemane-Grave-Boxed/dp/0385471777/ref=pd_sbs_14_1?_encoding=UTF8&pd_rd_i=0385471777&pd_rd_r=2S19CWH871CMRSCZP5NV&pd_rd_w=NfN1F&pd_rd_wg=DJ0Ik&psc=1&refRID=2S19CWH871CMRSCZP5NV. Accessed Dec. 9, 2017.

Burkert, W. (1987). Ancient mystery cults (Vol. 1). Harvard University Press. ISBN 0-674-03387-6. Available online at http://books.google.com/books?hl=en&lr=&id=qCvlvqCXF8UC&oi=fnd&pg=PA1&dq=%22Ancient+Mystery+Cults%22&ots=PTpb7HM7Ka&sig=YtiznCG5tMc6zgDQ_3PaLb9CuvA#v=onepage&q=%22Ancient%20Mystery%20Cults%22&f=false. Accessed December 6, 2017.

Callahan, T. (2009). "The Greatest Story Ever Garbled". Skeptic 28 (1). Available online at http://www.skeptic.com/eskeptic/09-02-25/#feature. Accessed December 6, 2017.

Clauss, M. (2001). The Roman cult of Mithras: the god and his mysteries. Taylor & Francis. Available online at http://books.google.com/books?hl=en&lr=&id=PCjFb2nxryEC&oi=fnd&pg=PR7&dq=%22The+Roman+cult+of+Mithras%22&ots=a3v8MtcL3Q&sig=cqDYWJISrJUA5ZeNM0xpbQwsxyc#v=onepage&q=%22The%20Roman%20cult%20of%20Mithras%22&f=false. Accessed December 6, 2017.

Clement of Alexandria. Stromateis 1.21.145.

Cross, F. L., & Livingstone, E. A. (Eds.). (2005). The Oxford dictionary of the Christian church. Oxford University Press. Available online at http://books.google.com/books?id=fUqcAQAAQBAJ&printsec=frontcover&dq=isbn:9780192802903&hl=en&sa=X&ei=8tGFVJmAEpS2oQTEl4K4AQ&ved=0CB8Q6AEwAA#v=onepage&q&f=false. Accessed December 6, 2017.

Duchesne, L. (1919). Christian worship: its origin and evolution: a study of the Latin liturgy up to the time of Charlemagne. Society for promoting Christian knowledge. Available online at http://books.google.com/books?hl=en&lr=&id=WRMvAAAAYAAJ&oi=fnd&pg=PR1&dq=Christian+Worship:+Its+Origin+and+Evolution&ots=kpdhqZ1aBa&sig=j2FHT_ndzZZOWIwvCkUUA8_DyJE#v=onepage&q=Christian%20Worship%3A%20Its%20Origin%20and%20Evolution&f=false. Accessed December 6, 2017.

Encyclopedia Britannica. (2017). Mithra. Available online at http://www.britannica.com/EBchecked/topic/386025/Mithra. Accessed December 6, 2017.

Ehrman, B. D., & Dixon, W. (2012). Did Jesus Exist?: The Historical Argument for Jesus of Nazareth. HarperOne, New York. Available online at http://www.amazon.com/Did-Jesus-Exist-Historical-Argument-ebook/dp/B0053K28TS/ref=sr_1_1?ie=UTF8&qid=1421368984&sr=8-1&keywords=Did+Jesus+Exist%3F. Accessed December 6, 2017.

Finegan, J. (1964). Handbook of Biblical Chronology: Principles of Time Reckoning in the Ancient World and Problems of Chronology in the Bible. Princeton: Princeton Univ. Press, pp. 23-29.

Gwynn, D. M. (2011). The 'End' of Roman Senatorial Paganism. In The Archaeology of Late Antique 'Paganism', Vol. 7. Eds. L. Lavan and M. Mulryan. ISBN-13: 9789004192379; ISBN-10: 9004192379. Pg. 135. Available online at https://books.google.com/books?id=Nz5z_AsU_jkC&pg=PA140&lpg=PA140&dq=saturnalia+Gwynn&source=bl&ots=49kdVX3Oc8&sig=kBRKS3VkeUyTMjbexCJeJq8mEMA&hl=en&sa=X&ei=-iuGVOCgJc6zoQS34oGYBg&ved=0CEAQ6AEwBQ#v=onepage&q=saturnalia%20Gwynn&f=false. Accessed December 6, 2017.

Harris, S.L. (2010). Understanding the Bible. McGraw-Hill Education; 8th edition (January 20, 2010). ISBN-10: 0073407445; ISBN-13: 978-0073407449. Online at www.amazon.com/Understanding-Bible-Stephen-Harris/dp/0073407445/ref=sr_1_1?ie=UTF8&qid=1514186342&sr=8-1&keywords=Understanding+the+Bible+harris. Accessed Dec. 9, 2017.

Hattenstone, S. (2003). "Darwin's child". London: The Guardian, Feb. 10, 2003. Available online at http://www.theguardian.com/world/2003/feb/10/religion.scienceandnature. Accessed December 6, 2017.

Joseph, P. (2007). Zeitgeist: The Movie Companion Source Guide. Zeitgeist The Movie. Available online at http://www.zeitgeistmovie.com/Zeitgeist,%20The%20Movie-%20Companion%20Guide%20PDF.pdf. Accessed December 6, 2017.

Justin Martyr. (155-157 C.E.). First Apology. Overview online at http://en.wikipedia.org/wiki/First_Apology_of_Justin_Martyr. Accessed December 6, 2017.

Lesko, B. S. (1999). The great goddesses of Egypt. University of Oklahoma Press. Available online at http://books.google.com/books?hl=en&lr=&id=Mb3F7roWPvsC&oi=fnd&pg=PR9&dq=The+Great+Goddesses+of+Egypt&ots=07OIB3mf5t&sig=qjvgxGBSoDR8z-ybveNLtFRAIag#v=onepage&q=The%20Great%20Goddesses%20of%20Egypt&f=false. Accessed December 6, 2017.

Lindemans, M.F. (1997). Anahita. Encyclopedia Mythica. Available online at http://www.pantheon.org/articles/a/anahita.html. Accessed December 6, 2017.

Martindale, C.C. (1908). Christmas. The Catholic Encyclopedia. Vol. 3. New York: Robert Appleton Company. Available online at http://www.newadvent.org/cathen/03724b.htm. Accessed December 6, 2017.

Meyboom, P. G. (1995). The Nile mosaic of Palestrina: early evidence of Egyptian religion in Italy (Vol. 121). Brill. Available online at https://books.google.com/books?id=jyTFEJ56iTUC&pg=PA72&lpg=PA72&dq=khoiak+calendar+dates&source=bl&ots=FcqPak2SCi&sig=CyfYYCc2ficVWK9ZGAtS94C_tOA&hl=en&sa=X&ei=UN2ZVNDrGonUoAS60IGYAw&ved=0CDQQ6AEwBA#v=onepage&q=khoiak%20calendar%20dates&f=false. Accessed December 6, 2017.

McGowan, A. (2002). How December 25 Became Christmas. Bible Review, 18(6), Pgs. 46-48. Available online at www.biblicalarchaeology.org/daily/biblical-topics/new-testament/how-december-25-became-christmas/. Accessed December 6, 2017.

Nash, R. H. (1984). Christianity and the Hellenistic world. Zondervan. ISBN-10: 0310452104; ISBN-13: 978-0310452102. Available online at http://www.amazon.com/Christianity-Hellenistic-World-Bible-Commentary/dp/0310452104/ref=sr_1_1?ie=UTF8&qid=1418601460&sr=8-1&keywords=Christianity+and+the+Hellenistic+World. Accessed December 6, 2017.

Nash, R. H. (1994). Was the New Testament Influenced by Pagan Religions. Christian Research Journal. Available online at http://www.iclnet.org/pub/resources/text/cri/cri-jrnl/web/crj0169a.html. Accessed December 6, 2017.

New York Folklore Society (NYFS) (1973). "New York folklore quarterly" 29. Cornell University Press. p. 294.

Origen of Alexandria. Homily on Leviticus 8.

Pearse, R. (2012). The Roman Cult of Mithras. Tertullian.org. Available online at http://www.tertullian.org/rpearse/mithras/display.php?page=main. Accessed December 6, 2017.

Pearse, R. (2014). Mithras and Christianity. Tertullian.org. Available online at http://www.tertullian.org/rpearse/mithras/display.php?page=mithras_and_christianity. Accessed December 6, 2017.

Plutarch. (1936). Isis and Osiris, in vol. V of the Moralia, tr. Frank Cole Babbitt, Loeb Classical Library, Harvard University Press, 1936.

Renan, E. (1882). Marc-Aurèle et la fin du monde antique. Calmann-Lévy, Paris. p. 579. Available online (in French) at http://books.google.com/books?hl=en&lr=&id=6E2auh5QnD0C&oi=fnd&pg=PP7&dq=%22Marc-Aurele+et+la+fin+du+monde+antique%22&ots=r4CYIpIdhk&sig=OzF80ZU4XJNBCUbsZ_-5yzH7y0c#v=onepage&q=%22Marc-Aurele%20et%20la%20fin%20du%20monde%20antique%22&f=false. Accessed December 6, 2017.

Roll, S.K. (1995). Towards the Origin of Christmas. (Kok Pharos Publishing 1995 ISBN 90-390-0531-1) p. 82, cf. note 115. Available online at http://books.google.com/books?id=6MXPEMbpjoAC&pg=PA82&dq=Roll+%22appropriate+that+Christ%22&hl=en&sa=X&ei=-S_LUJ3LFc22hAeluIGADg&redir_esc=y#v=onepage&q=Roll%20%22appropriate%20that%20Christ%22&f=false. Accessed December 6, 2017.

Rothenberg, D. J. (2011). The Flower of Paradise: Marian Devotion and Secular Song in Medieval and Renaissance Music. Oxford University Press. Available online at http://books.google.com/books?hl=en&lr=&id=XkVpAgAAQBAJ&oi=fnd&pg=PP1&dq=The+Flower+of+Paradise&ots=UKr8UFOyYa&sig=hsC7RZNsgrdyKI20DfIO7fbaFfU#v=onepage&q=The%20Flower%20of%20Paradise&f=false. Accessed December 6, 2017.

Roy, C. (2005). Traditional Festivals, Vol. 2 [M-Z]: A Multicultural Encyclopedia (Vol. 1). ABC-CLIO. Pg. 146. Available online at http://books.google.com/books?hl=en&lr=&id=IKqOUfqt4cIC&oi=fnd&pg=PR7&dq=Traditional+Festivals:+A+Multicultural+Encyclopedia&ots=6tY88ts9EK&sig=eWI_mJ1Sr5GRL12P6uD2zXkS-SM#v=onepage&q=Traditional%20Festivals%3A%20A%20Multicultural%20Encyclopedia&f=false. Accessed December 6, 2017.

Salusbury, M. (2009). Did the Romans Invent Christmas? History Today, 59 (12). Available online at http://www.historytoday.com/matt-salusbury/did-romans-invent-christmas. Accessed December 6, 2017.

Senn, F. C. (2006). The People's Work: A Social History of the Liturgy. Fortress Press. p.72. Available online at http://books.google.com/books?id=WcYG2j0jFjQC&pg=PA72&dq=Christmas+Nisan+world&hl=en&sa=X&ei=vb0XVMS1Jo7b7Abo84HwBA&ved=0CE8Q6AEwBg#v=onepage&q=Christmas%20Nisan%20world&f=false. Accessed December 6, 2017.

Senn, F. C. (2012). Introduction to Christian Liturgy. Fortress Press. p. 114. Available online at http://www.amazon.com/Introduction-Christian-Liturgy-Frank-Senn/dp/0800698851/ref=sr_1_1?s=books&ie=UTF8&qid=1418056980&sr=1-1&keywords=9780800698850. Accessed December 6, 2017.

Scholz, P.O. (2001). Eunuchs and castrati: a cultural history. Markus Wiener Publishers. p. 32. ISBN 1-55876-201-9.

Shaw, I. (2003). The Oxford History of Ancient Egypt. Oxford University Press. ISBN 0-19-815034-2.

Talley, T. J. (1986). The origins of the liturgical year. New York: Pueblo Publishing Company, ISBN-10: 0814660754; ISBN-13: 978-0814660751. Available online at http://www.amazon.com/Origins-Liturgical-Year-Second-Emended/dp/0814660754/ref=sr_1_1?ie=UTF8&qid=1418049622&sr=8-1&keywords=origins+of+the+liturgical+year. Accessed December 6, 2017.

Tertullian of Carthage. Adversus Iudaeos 8.

Tighe, W.J. (2003). Calculating Christmas: The Story Behind December 25. Touchstone Journal, Dec. 2003. Available online at http://touchstonemag.com/archives/article.php?id=16-10-012-v. Accessed December 6, 2017.

Traunecker, C. (2001). The gods of Egypt. Cornell University Press. Available online at http://books.google.com/books?hl=en&lr=&id=y78zDGDCUjkC&oi=fnd&pg=PR7&dq=The+Gods+of+Egypt&ots=JceDGSVv37&sig=-fj_j8oC6J3ASyUa3vtZwKmCORs#v=onepage&q=The%20Gods%20of%20Egypt&f=false. Accessed December 6, 2017.

Truth Be Known. (2017). Personal website of Dorothy M. Murdoch (Acharya S). Available online at www.truthbeknown.com. Subpages with Murdoch’s bio and credentials are at http://www.truthbeknown.com/author.html and http://www.truthbeknown.com/credentials.html respectively. Accessed December 6, 2017.

Ulansey, D. (1991). The origins of the Mithraic mysteries: Cosmology and salvation in the ancient world. Oxford University Press. Available online at http://books.google.com/books?hl=en&lr=&id=25_SOWldSUUC&oi=fnd&pg=PA3&dq=The+Origins+of+the+Mithraic+Mysteries:+Cosmology+and+Salvation+in+the+Ancient+World&ots=N49iON72KU&sig=ZzVNyHACTOgKs0RGncsydXq-Cbw#v=onepage&q=The%20Origins%20of%20the%20Mithraic%20Mysteries%3A%20Cosmology%20and%20Salvation%20in%20the%20Ancient%20World&f=false. Accessed December 6, 2017.

Vermaseren, M. J. (1951). The miraculous birth of Mithras. Mnemosyne, Fourth Series, Vol. 4, Fasc. 3/4 (1951), pp. 285-301. Available online at http://www.jstor.org/stable/4427315?seq=1. Accessed December 6, 2017.

Wainwright, G., & Tucker, K. B. W. (Eds.). (2006). The Oxford history of Christian worship. Oxford University Press. Pg. 65. Available online at http://books.google.com/books?hl=en&lr=&id=h5VQUdZhx1gC&oi=fnd&pg=PR5&dq=The+Oxford+History+of+Christian+Worship&ots=z7IETfcXtd&sig=aAF-LwH9eecVFdziSEhiZq8EVw8#v=onepage&q=The%20Oxford%20History%20of%20Christian%20Worship&f=false. Accessed December 6, 2017.

Widengren, G. (1966). The Mithraic Mysteries in the Graeco-Roman World with Special Regard to their Iranian background. La Persia e il mondo grecoromano Accad. Naz. dei Lincei 76, pp. 444-45; I. M. Diakonoff, Phrygian (Delmar, N.Y., 1985).

Wikipedia (2017). Sol Invictus: Sol Invictus and Christianity and Judaism. Available online at http://en.wikipedia.org/wiki/Sol_Invictus#Sol_Invictus_and_Christianity_and_Judaism. Accessed December 6, 2017.

Wikipedia (2017b). Persecution of Christians: 2nd Century to Constantine. Available online at http://en.wikipedia.org/wiki/Persecution_of_Christians#Persecution_from_the_2nd_century_to_Constantine. Accessed December 6, 2017.

Wikipedia (2017c). Mithra. Available online at http://en.wikipedia.org/wiki/Mithra. Accessed December 6, 2017.

Wikipedia (2017d). Avesta. Available online at http://en.wikipedia.org/wiki/Avesta. Accessed December 6, 2017.

Wikipedia (2017e). Mithraic Mysteries. Available online at http://en.wikipedia.org/wiki/Mithraic_mysteries. Accessed December 6, 2017.

Wikipedia (2017f). Mithras in comparison with other belief systems: Mithraism and Christianity. Available online at http://en.wikipedia.org/wiki/Mithras_in_comparison_with_other_belief_systems#Mithraism_and_Christianity. Accessed December 6, 2017.

Wikipedia (2017g). Egyptian Calendar. Coptic Calendar. Available online at http://en.wikipedia.org/wiki/Egyptian_calendar with additional material on the Coptic Calendar at http://en.wikipedia.org/wiki/Coptic_calendar. Accessed December 6, 2017.

Wikipedia (2017h). Horus. Available online at http://en.wikipedia.org/wiki/Horus. Accessed December 6, 2017.

Wikipedia (2017i). Christmas Tree. Available online at http://en.wikipedia.org/wiki/Christmas_tree. Accessed December 6, 2017.

Wikipedia (2017j). Zeitgeist (film series). Available online at http://en.wikipedia.org/wiki/Zeitgeist_(film_series). Accessed December 6, 2017.

Wikipedia (2017k). Christ myth theory. Available online at http://en.wikipedia.org/wiki/Christ_myth_theory. Accessed December 6, 2017.

Wikipedia (2017m). Benito Mussolini: Religious Views. Available online at http://en.wikipedia.org/wiki/Benito_Mussolini#Religious_views. Accessed December 6, 2017.

Wikipedia (2017n). Adolf Hitler: Religious Views. Available online at http://en.wikipedia.org/wiki/Adolf_Hitler#Religious_views. Accessed December 6, 2017.

Wikipedia (2017o). Theosophical Society. Available online at http://en.wikipedia.org/wiki/Theosophical_Society. Accessed December 6, 2017.

Wikipedia (2017p). Helena Blavatsky. Available online at http://en.wikipedia.org/wiki/Helena_Blavatsky. Accessed December 6, 2017.

Wikipedia (2017q). Acharya S. Available online at http://en.wikipedia.org/wiki/Acharya_S. Accessed December 6, 2017.

Winston, E. L. (2007). Zeitgeist – The Movie Debunked. Skeptic Project Online, Nov. 29, 2007. Available online at http://conspiracies.skepticproject.com/articles/zeitgeist/. Accessed December 6, 2017.

Wybrew, H. (1997). Orthodox Feasts of Jesus Christ & the Virgin Mary: Liturgical Texts With Commentary. St Vladimir's Seminary Press. ISBN 978-0-88141203-1; ISBN-13: 978-0881412031. Available online at http://www.amazon.com/Orthodox-Feasts-Jesus-Christ-Virgin/dp/0881412031/ref=sr_1_1?ie=UTF8&qid=1418062432&sr=8-1&keywords=Orthodox+Feasts+of+Jesus+Christ+%26+the+Virgin+Mary. Accessed December 6, 2017.